With H200s set to flow into China, Groq is reportedly set to follow: Nvidia is allegedly preparing a custom version of its inferencing chip to break into the region

Even Nvidia is looking to older products amid the AI-induced shortages.

The silicon silk road to China is open once again: Beijing has given full approval for Nvidia to sell its last-generation H200 GPUs in the country. This follows months of back-and-forth talks between the U.S. government, Nvidia, and China; although the Trump administration had approved the sales, the Chinese government still needed to give the final nod.

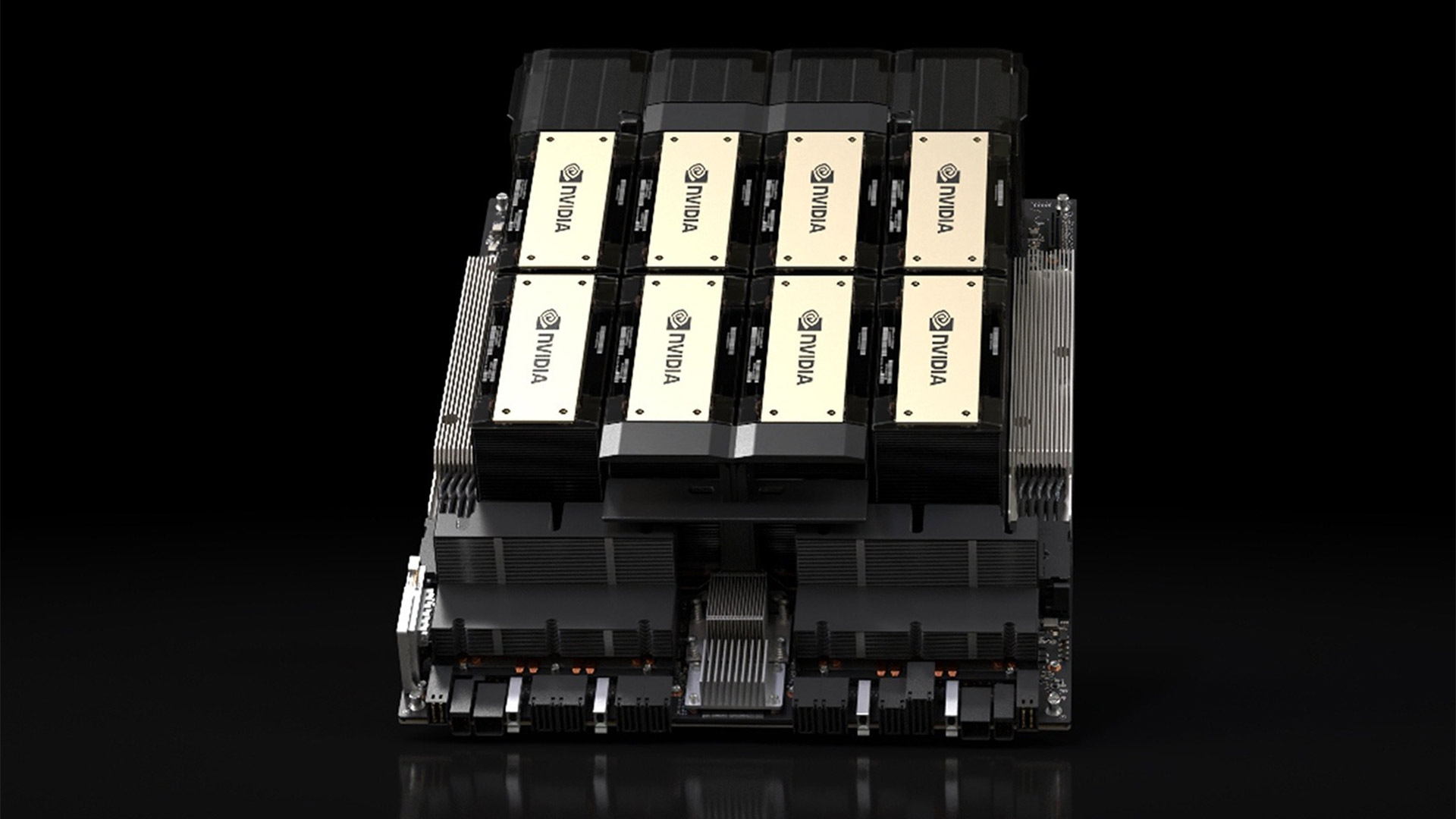

With that approval in hand, Nvidia is gearing up for a big sales pitch to Chinese companies, Reuters reports, and not just for its H200 GPU. CEO Jensen Huang has said the company has already received orders for H200 chips, but it's arguably Groq's custom AI inferencing hardware that Nvidia is keener to sell. Having struck a $14 billion deal with Groq, which develops custom AI ASICs known as Language Processing Units (LPUs), Nvidia wants a return on its investment. Can the company break into China's burgeoning market for inferencing chips?

An oldie but a goodie

On the enterprise front, Nvidia had to restart the production line for its H200 chips, having spent much of the past 12 months pushing its successor, the B300, which is based on the newer Blackwell architecture, the same one found in RTX 50-series GPUs. The company had wound down H200 production because of the regulatory hurdles it was forced to jump through, both at home and in China.

With China prioritizing its domestic industry and hesitant to let Nvidia hardware build a monopoly, it seemed unlikely that the H200 would reach the region in any significant quantity.

The H200 may be last-generation, but that doesn't make it incapable. It's around six times as powerful as the H20, the cut-down AI GPU Nvidia previously sold to China under rules meant to curb the country's access to high-end hardware. Now that the H200 is back on the menu, Nvidia has reportedly received a lot of interest in it from Chinese companies.

CEO Jensen Huang said earlier this week that Nvidia had received licenses to supply "many customers in China" and had received orders from a number of companies. To fulfill them, Nvidia is restarting the H200 production line, with Huang himself saying that the "supply chain is getting fired up."

This will be exciting news for Nvidia investors, as the company reportedly didn't include potential H200 revenue from China in the $1 trillion revenue outlook it has suggested for 2027.

But Nvidia won't keep all of the proceeds from these sales. Although the Trump administration has approved some H200 sales to China, they come with a 25% revenue share for the U.S. government. Nvidia will have to pay the fee when the chips arrive in the U.S. from their fabrication facilities for approval, before they are re-exported.

But the H200 isn't the only new(ish) hardware Nvidia will use to improve its market position in China. The company is also adapting Groq's custom inferencing chips for the market.

Groq to take on China

Groq is the AI inferencing hardware company Nvidia acquired in late 2025, not to be confused with Elon Musk and xAI's Grok. Technically, Nvidia only licensed Groq's technology and hired all its top staff, but that's an acquisition in every way but name, and it conveniently sidesteps antitrust investigations and regulatory approval.

These LPUs are efficient and capable at inference, the day-to-day work of running AI models. Although Nvidia's GPUs are likely to remain the gold standard for training large language models for some time to come, the inferencing market is much more competitive, especially in China. That's why even the mighty Nvidia looked outside its own organization to find the competitive edge it needed.

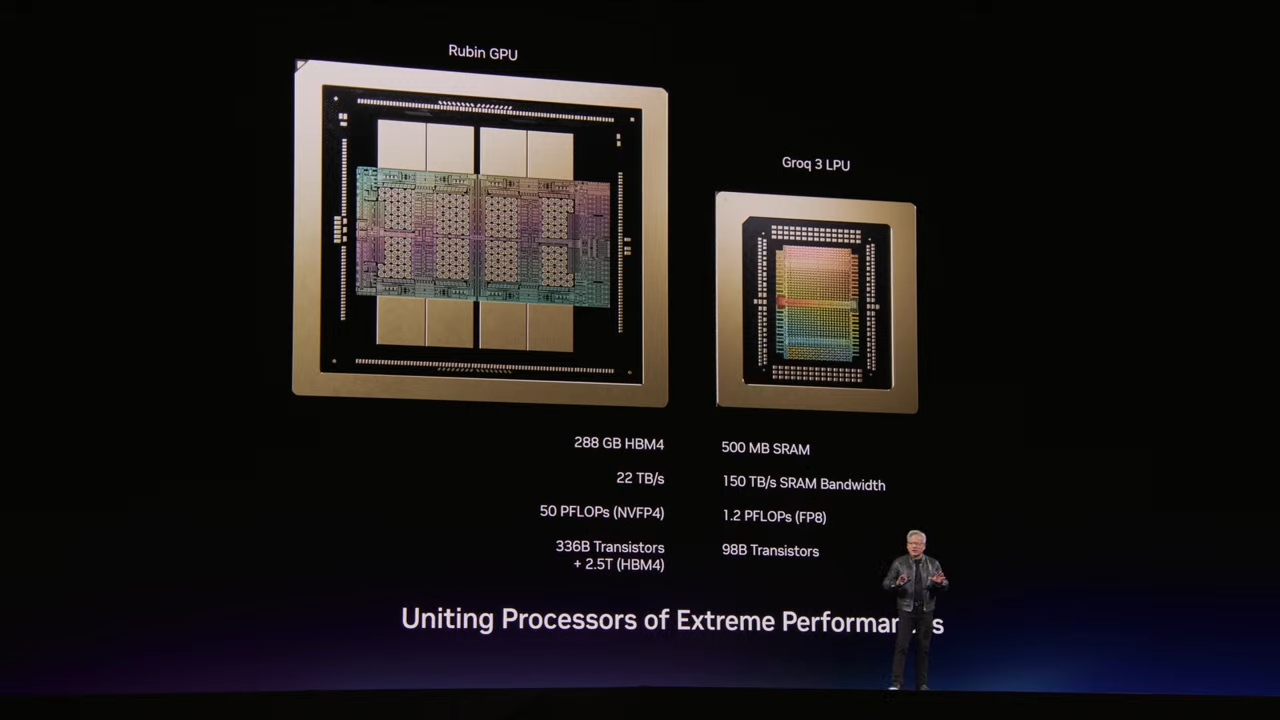

Developed by company founder and ex-Google TPU designer Jonathan Ross, Groq's LPUs are being built into Nvidia's Vera Rubin server stacks to deliver a comprehensive AI platform that keeps Nvidia at the core, even when it's the licensed technology that's doing the work.

As with its GPUs, Nvidia looks set to adapt its Groq LPUs for China. According to Reuters' sources, it doesn't have to downgrade their performance, but since it can't sell Vera Rubin to China, it may need to sell the LPUs separately or bundle them with older GPU technology. Using Groq would keep CUDA relevant in the region, deepening Nvidia's foothold among developers.

Whatever configuration Nvidia ends up with, the company will need to convince Chinese companies to adopt it. Even where they lack homegrown training hardware, Chinese tech giants like Baidu and Huawei are developing their own inferencing chips, with substantial backing from China's ruling party to accelerate their development. Beijing is also pushing to develop advanced chipmaking tools domestically.

There's also stiff competition from the likes of Meta, which may be chasing the pipe dream of superintelligent AI but is also building its own MTIA inferencing chips, with a long roadmap of future releases ahead. Google, meanwhile, has its own custom TPUs, and Amazon has its Trainium and Inferentia chips, both of which are very competitive in the field.

With that in mind, it's no wonder Nvidia is rushing to take advantage of its latest acqui-hire. The LPUs designed for the Chinese market are reportedly targeting a May release. With H200s also set to flow into China, Nvidia's market share in the region is all but certain to rise from the nadir of "0%" that Huang cited last fall.

3DTested has reached out to Nvidia for additional comment.