How Nvidia's $20 billion Groq 3 LPU deal reshapes the Nvidia Vera Rubin Platform — Samsung 4nm process serves as bedrock for SRAM-based AI accelerator chip

The Groq 3 LPU arrives as Rubin CPX appears to exit the roadmap.


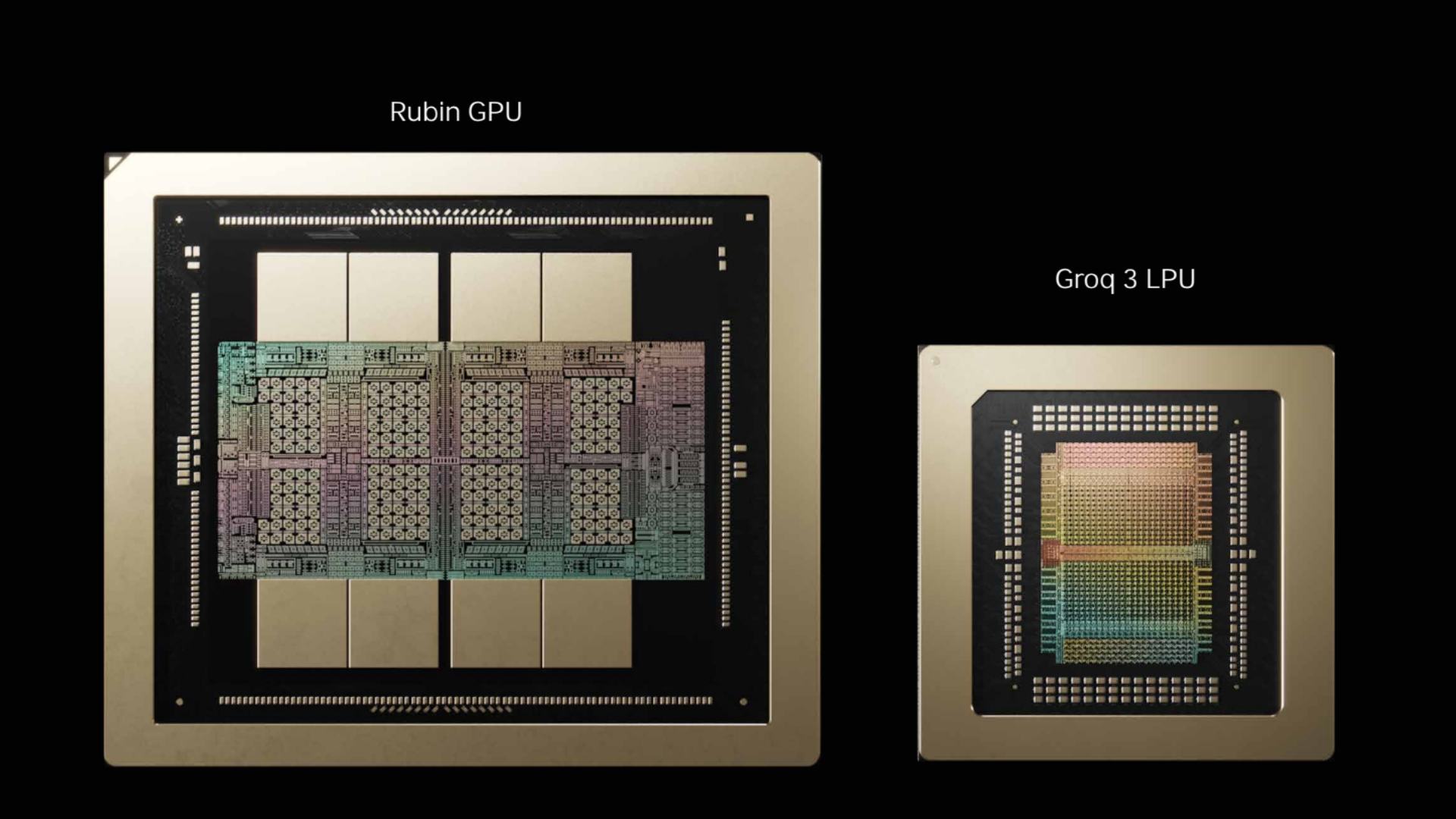

Nvidia unveiled the Groq 3 language processing unit at GTC 2026 in San Jose on Monday, marking the first chip to emerge from its $20 billion licensing and talent deal with AI inference startup Groq, which was struck on Christmas Eve last year. The SRAM-based inference accelerator slots into the Vera Rubin platform as a dedicated decode-phase co-processor, and Nvidia plans to ship it in Q3 2026, manufactured by Samsung on a 4nm process. It is the company's first rack-scale product built around non-GPU silicon — and its arrival has already displaced a homegrown Nvidia chip from the roadmap.

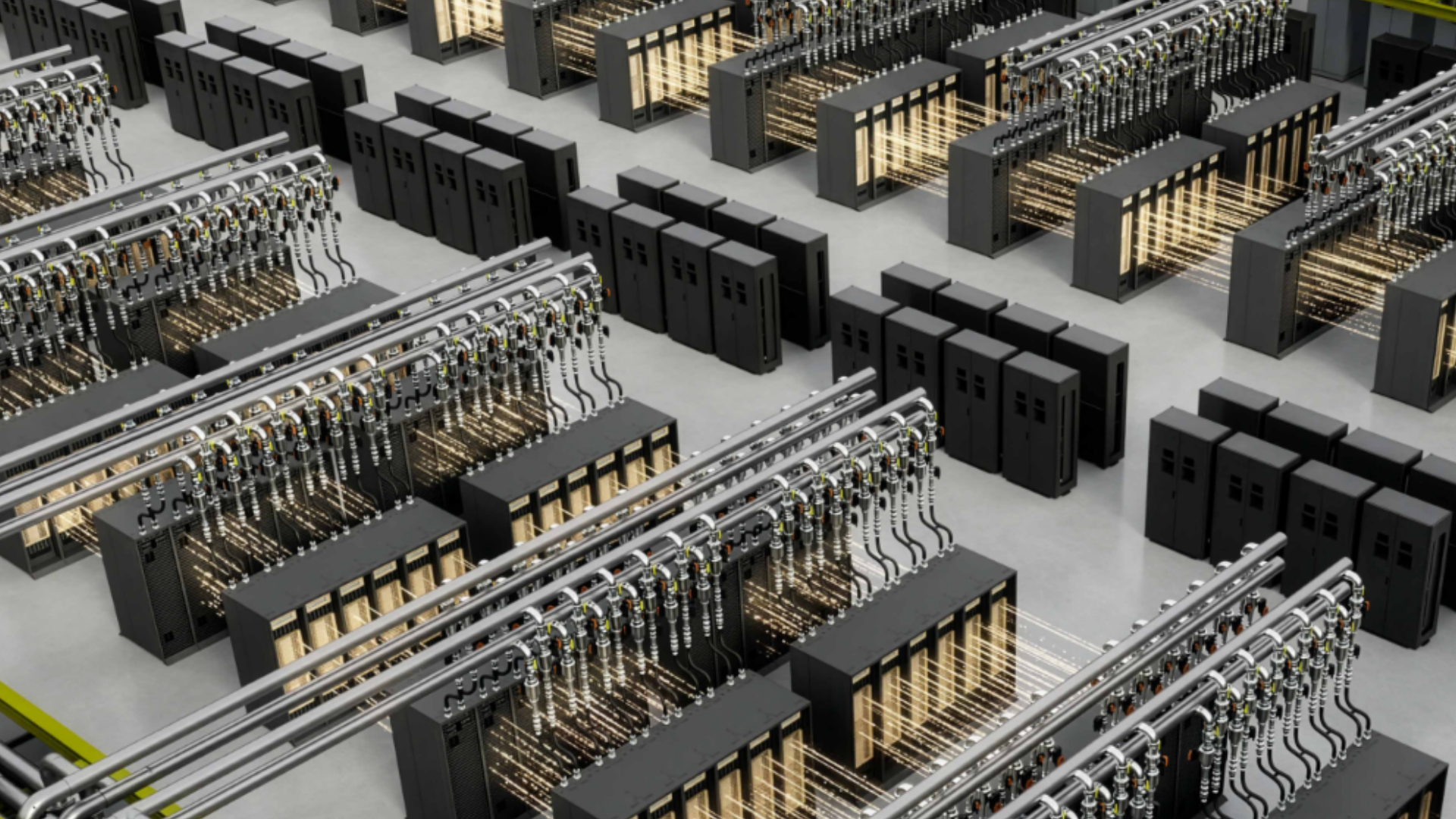

The LP30 chip at the heart of the Groq 3 LPX rack carries 512 MB of on-chip SRAM per die, delivering 150 TB/s of memory bandwidth. That figure dwarfs the 22 TB/s available from the 288 GB of HBM4 on each Rubin GPU. A full LPX rack houses 256 LPUs for a total of 128 GB of SRAM and roughly 40 PB/s of aggregate bandwidth. Nvidia claims the LPX rack, paired with a Vera Rubin NVL72, delivers 35 times higher throughput per megawatt than Blackwell NVL72 alone for trillion-parameter models, at a target price point of $45 per million tokens.
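The rack-level figures follow from the per-die numbers, and a quick check makes the rounding visible. The sketch below is a back-of-the-envelope calculation, not an Nvidia tool: the constant and function names are made up for illustration, and it assumes binary units for capacity (1 GB = 1,024 MB) and decimal units for bandwidth (1 PB/s = 1,000 TB/s).

```python
# Per-die and per-rack figures as quoted above.
LPUS_PER_RACK = 256
SRAM_PER_LPU_MB = 512
BANDWIDTH_PER_LPU_TBS = 150
RUBIN_HBM4_BANDWIDTH_TBS = 22


def rack_totals(lpus=LPUS_PER_RACK, sram_mb=SRAM_PER_LPU_MB, bw_tbs=BANDWIDTH_PER_LPU_TBS):
    """Return (total SRAM in GB, aggregate bandwidth in PB/s) for one rack."""
    total_sram_gb = lpus * sram_mb / 1024   # assumption: 1 GB = 1,024 MB
    total_bw_pbs = lpus * bw_tbs / 1000     # assumption: 1 PB/s = 1,000 TB/s
    return total_sram_gb, total_bw_pbs


if __name__ == "__main__":
    sram_gb, bw_pbs = rack_totals()
    ratio = BANDWIDTH_PER_LPU_TBS / RUBIN_HBM4_BANDWIDTH_TBS
    print(f"SRAM per rack: {sram_gb:.0f} GB")
    print(f"Aggregate bandwidth: {bw_pbs:.1f} PB/s")
    print(f"LP30 vs Rubin HBM4 bandwidth: {ratio:.1f}x")
```

On these assumptions the capacity comes out to exactly 128 GB, while the bandwidth works out to 38.4 PB/s, which the quoted 40 PB/s figure rounds up.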

Groq 3 and Vera Rubin

Rubin GPUs handle the compute-intensive prefill phase of a query, processing long input contexts, while Groq LPUs take over the decode phase, generating output tokens at low latency. Nvidia's Dynamo orchestration platform manages the split across heterogeneous hardware, distributing workloads based on batch size and parallelism requirements.
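As a rough mental model of that division of labor, the sketch below tags each phase of a request with the hardware pool that would serve it. It is a toy illustration only: `Request`, `PhaseAssignment`, `plan_phases`, and the pool labels are hypothetical, it does not use or reflect Dynamo's actual API, and it ignores the batch-size and parallelism inputs that the real scheduler is said to weigh.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Request:
    prompt_tokens: int    # length of the input context
    max_new_tokens: int   # number of output tokens to generate


@dataclass(frozen=True)
class PhaseAssignment:
    phase: str    # "prefill" or "decode"
    pool: str     # hardware pool that serves the phase
    tokens: int   # tokens handled in this phase


# Hypothetical pool labels, chosen for this example only.
PREFILL_POOL = "rubin_gpu"
DECODE_POOL = "groq_lpu"


def plan_phases(req: Request) -> list[PhaseAssignment]:
    """Split one request into a prefill step and a decode step."""
    return [
        PhaseAssignment("prefill", PREFILL_POOL, req.prompt_tokens),
        PhaseAssignment("decode", DECODE_POOL, req.max_new_tokens),
    ]


if __name__ == "__main__":
    for step in plan_phases(Request(prompt_tokens=8000, max_new_tokens=256)):
        print(f"{step.phase:>7} -> {step.pool} ({step.tokens} tokens)")
```

The point of the split is that the two phases stress hardware differently: prefill processes the whole prompt at once and is compute-bound, while decode emits one token at a time and leans on memory bandwidth.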

The original, pre-Nvidia Groq LPU design used a fixed Very Long Instruction Word (VLIW) pipeline and large on-chip SRAM pools. The compiler pre-scheduled the entire execution path at compile time, which meant deterministic latency with no cache misses or stalls. These chips also demonstrated raw single-user token rates in the thousands per second, but the architecture's weakness was always capacity. At 230 MB of SRAM per chip in prior generations, fitting even medium-sized models required high chip counts, and the architecture was initially designed for convolutional neural networks.
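The capacity constraint is easy to quantify. The sketch below estimates how many chips are needed just to hold a model's weights in on-chip SRAM. It is a simplification under stated assumptions: the function name is invented, 1 MB is taken as 1,048,576 bytes, and the 70-billion-parameter model at one byte per weight is a hypothetical example rather than a reported figure. KV cache, activations, and any replication are ignored, so real deployments would need more chips.

```python
BYTES_PER_MB = 1024 * 1024  # assumption: binary megabytes


def chips_needed(num_params: int, bytes_per_param: int, sram_mb_per_chip: int) -> int:
    """Minimum chip count whose combined SRAM can hold the model weights."""
    weight_bytes = num_params * bytes_per_param
    sram_bytes = sram_mb_per_chip * BYTES_PER_MB
    return -(-weight_bytes // sram_bytes)  # ceiling division on integers


if __name__ == "__main__":
    params = 70_000_000_000  # hypothetical model size, not from any vendor spec
    for label, sram_mb in (("prior generation", 230), ("LP30", 512)):
        count = chips_needed(params, bytes_per_param=1, sram_mb_per_chip=sram_mb)
        print(f"{label} ({sram_mb} MB per chip): {count} chips")
```

For this hypothetical model, the count drops from 291 chips at 230 MB to 131 at 512 MB, a little under half, which shows how a larger SRAM pool eases the constraint without removing it.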

The Groq LP30 addresses some of these limitations with 512 MB of SRAM per die and 1.23 FP8 PFLOPS of compute capability. Samsung has ramped production from roughly 9,000 wafers to about 15,000 wafers as output shifts from samples to commercial manufacturing. AWS, meanwhile, announced at GTC that it will deploy Groq 3 LPUs alongside more than one million Nvidia GPUs as part of an expanded partnership.

Beyond the LP30, a future LP35 will add NVFP4 support, aligning with the Rubin Ultra generation, and an LP40 is planned for the Feynman architecture cycle after that.

Rubin CPX axed?

Conspicuously missing from GTC was the Rubin CPX, a GDDR7-based inference accelerator announced in September 2025 as part of the Vera Rubin platform; it appeared on no keynote slides and received no stage time. Although Nvidia has not confirmed it, the CPX appears to have been removed from the company’s roadmap entirely, replaced in the platform hierarchy by Groq 3 LPX.

Rubin CPX was designed to use cheaper, more available GDDR7 memory to accelerate the context phase of inference at lower power. But the Groq LPU offers higher bandwidth without requiring large quantities of any external memory, an advantage in a market where HBM supply remains constrained and GDDR7 production is still scaling. Off-roadmap parts could still ship to customers who have already invested in CPX software optimization, but there’s a clear shift in priorities at Nvidia.

There’s also a striking parallel with the Mellanox acquisition in 2019, which ended up turning Nvidia’s NVLink and InfiniBand technologies into foundational infrastructure for AI clusters. Groq appears to be following a similar trajectory, whereby start-up tech is absorbed into the platform as a permanent new architectural layer.

Inference chip consolidation

Nvidia's Groq deal is the largest in a wave of inference-focused acquisitions that swept through the semiconductor industry in 2025. In June, AMD acquired the engineering team from Untether AI, a RISC-V inference chip developer, after the startup shut down, and Nvidia itself paid over $900 million for networking startup Enfabrica's team and IP in September. Meta acquired custom-chip startup Rivos in October, and Intel attempted to buy SambaNova for a reported $1.6 billion, but the talks collapsed; the two companies settled on a $350 million investment and multi-year partnership last month instead.

The pattern is consistent: independent inference chip startups are being absorbed by large incumbents, and the economics of competing independently against Nvidia's CUDA ecosystem have proven unsustainable regardless of technical merit. Groq itself was targeting $500 million in revenue for fiscal year 2025, but even that wasn’t enough to sustain independence. Bernstein analyst Stacy Rasgon noted in a research report that the deal's non-exclusive licensing structure may keep "the fiction of competition alive" while effectively neutralizing a rival.

Hyperscaler custom silicon

While startups consolidate into incumbents, the hyperscalers are building their own inference hardware at an accelerating pace.

Meta announced four successive MTIA chip generations on March 11, all developed in partnership with Broadcom: the MTIA 300 (already in production for ranking and recommendation training), MTIA 400 (completing lab testing), MTIA 450, and MTIA 500, with the latter two targeted at generative AI inference and scheduled for mass deployment in 2027. The company has already deployed hundreds of thousands of earlier MTIA chips for inference across its apps. From MTIA 300 to 500, HBM bandwidth increases 4.5 times, and compute FLOPS increase 25 times.

Google's Ironwood TPU v7 delivers 4,614 TFLOPS per chip with 192 GB of HBM per die, scaling to 42.5 exaflops in 9,216-chip pods. AWS continues developing Trainium and Inferentia, though internal data reported in 2024 showed Trainium at just 0.5% of Nvidia GPU usage within AWS and Inferentia at 2.7%, suggesting adoption has lagged.

Spending figures also demonstrate this diversification, with a Futurum Group survey from November 2025 finding that XPU accelerators are expected to lead data center compute spending growth at 22% in 2026, outpacing GPUs at 19% and CPUs at 14%. Meanwhile, TrendForce projects custom ASIC shipments from cloud providers growing 44.6% in 2026, compared to 16.1% growth for GPU shipments.

Nvidia's response is to make sure its platform includes non-GPU silicon before someone else's does, and the Groq 3 LPU is the product of that. Meanwhile, the future of Rubin CPX appears uncertain, at least for now.