ISSCC 2026: AMD discloses how the Instinct MI355X doubled per-CU throughput despite lower compute unit count — 'We are actually matching the performance of the more expensive and complex GB200'

AMD's engineers traded compute unit count for per-CU throughput

AMD’s Instinct MI350X-series AI GPU, with its cutting-edge CDNA 4 architecture, was released way back in June, but the recent ISSCC symposium in San Francisco is where we got a first deep dive into the engineering behind it.

Taking to the stage on February 16, AMD fellow design engineer Ramasamy Adaikkalavan talked through how AMD managed to fit nearly double the compute throughput into the same die area as its predecessor, and why the MI355X has fewer compute units than the GPU it replaces.

Fewer compute units, more throughput

Each Accelerator Complex Die (XCD) in the MI355X contains 32 active compute units, down from 38 in the MI300X, but AMD doubled per-CU FP8 throughput in the process — from 4,096 FLOPS per clock to 8,192 — by redesigning the matrix execution hardware rather than simply adding more of it.

The company also adopted what it called a selective sharing strategy. Instead of building entirely dedicated hardware for each numeric format (expensive in die area) or sharing all hardware across every format (cheaper, but inefficient), AMD analyzed each arithmetic component individually and shared only where the power penalty was acceptable. As a result, the MI355X delivers five petaflops of FP8 compute, a 1.9x improvement over the MI300X, while fitting into the same 110 mm² of die area per XCD.
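
The headline figures can be reproduced from the per-CU numbers with simple arithmetic: peak throughput is total CUs times FLOPS per CU per clock times clock speed. The sketch below assumes eight XCDs per package for both chips and a 2,100 MHz peak clock for the MI300X, neither of which is stated above; the 2,400 MHz MI355X clock is the figure AMD quotes for the flagship part.

```python
# Peak dense FP8 throughput = CUs x FP8 FLOPS per CU per clock x clock (Hz).
# Assumptions not stated in the article: 8 XCDs per package for both chips
# and a 2,100 MHz peak clock for the MI300X.

XCDS_PER_PACKAGE = 8  # assumption

def peak_flops(cus_per_xcd: int, flops_per_cu_per_clock: int, clock_mhz: float) -> float:
    """Return peak throughput in FLOPS for a package of XCDS_PER_PACKAGE dies."""
    total_cus = XCDS_PER_PACKAGE * cus_per_xcd
    return total_cus * flops_per_cu_per_clock * clock_mhz * 1e6

mi300x = peak_flops(cus_per_xcd=38, flops_per_cu_per_clock=4096, clock_mhz=2100)
mi355x = peak_flops(cus_per_xcd=32, flops_per_cu_per_clock=8192, clock_mhz=2400)

print(f"MI300X: {mi300x / 1e15:.2f} PFLOPS")
print(f"MI355X: {mi355x / 1e15:.2f} PFLOPS")
print(f"Ratio:  {mi355x / mi300x:.2f}x")
```

Under these assumptions, the roughly 1.9x gain decomposes into 2x per-CU throughput, times 256/304 for the smaller CU count, times 2,400/2,100 for the higher clock.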

"The 32-CU count is an intentional choice," says Adaikkalavan. "It maintains a clean power-of-two structure, which simplifies tensor tiling and workload partitioning for the AI kernels." A power-of-two CU count makes it easier for AI kernels to divide work evenly across the hardware, reducing the tail effect, which is the performance penalty that occurs when the last batch of work doesn't fill the available compute resources.
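
The tail effect can be illustrated with a simplified wave-quantization model: a kernel splits its output into tiles, each CU processes one tile per wave, and any wave that cannot fill every CU leaves some of them idle. The tile counts below are hypothetical, and the model ignores real-world factors such as uneven tile runtimes and multiple workgroups per CU.

```python
import math

def wave_utilization(num_tiles: int, num_cus: int) -> float:
    """Fraction of CU slots doing useful work when tiles run in full waves.

    Simplified model (assumption): one tile per CU per wave, all tiles take
    equal time, and a new wave starts only after the previous one finishes.
    """
    waves = math.ceil(num_tiles / num_cus)
    return num_tiles / (waves * num_cus)

# Hypothetical power-of-two tile counts on a 32-CU and a 38-CU XCD.
for tiles in (64, 128, 256):
    for cus in (32, 38):
        print(f"{tiles:4d} tiles on {cus} CUs: {wave_utilization(tiles, cus):.1%} busy")
```

With power-of-two tile counts, 32 CUs always finish on a full wave, while 38 CUs leave the last wave partly empty; 64 tiles, for example, occupy only 64 of 76 CU slots, about 84%.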

The XCD also gained two additional metal routing layers with the jump from TSMC's N5 to N3P, increasing the metal stack from 15 to 17 layers and addressing a growing back-end-of-line problem. "Even though Moore's law is slowing down, we still get some good linear scaling in logic density," Adaikkalavan said. "However, routing density is not keeping up." At 3nm, wiring now accounts for a larger fraction of total switching power than it did in prior generations, so AMD responded with careful floorplan optimization and ML-based placement algorithms to minimize wire length on active signal nets.
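
The reasoning maps onto the standard first-order model of CMOS switching power (a textbook approximation, not a formula from AMD's talk), where $\alpha$ is the activity factor, $V_{DD}$ the supply voltage, $f$ the clock frequency, and the switched capacitance splits into gate and wire contributions:

$$
P_{\text{sw}} = \alpha \, C_{\text{sw}} \, V_{DD}^{2} \, f, \qquad C_{\text{sw}} = C_{\text{gate}} + C_{\text{wire}}
$$

When routing density lags logic density, $C_{\text{wire}}$ makes up a growing share of $C_{\text{sw}}$, so shortening wires on nets that toggle often (high $\alpha$) is one of the more direct ways to cut power without lowering $V_{DD}$ or $f$.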

Combining these measures with custom activity-based clock gating cells, which detect when a data stream of repeated zeros or ones would toggle the clock for no reason and shut it off, AMD targeted a greater than 30% reduction in process-neutral switching capacitance per operation compared to the MI300X's XCDs.
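
Activity-based clock gating can be pictured with a behavioral model: a register whose clock is suppressed whenever the incoming word matches the stored one, so runs of repeated zeros or ones cost no clock edges. This is a hypothetical sketch of the general technique, not a description of AMD's cell design, and the `GatedRegister` class and input stream are invented for illustration.

```python
from dataclasses import dataclass

@dataclass
class GatedRegister:
    """Behavioral model of a register behind an activity-based clock gate.

    Hypothetical sketch: the gate compares the incoming word with the stored
    word and holds off the clock edge when they match.
    """
    value: int = 0
    clocked_cycles: int = 0
    gated_cycles: int = 0

    def tick(self, data_in: int) -> None:
        if data_in == self.value:
            # Nothing would change, so the clock to the flops is suppressed.
            self.gated_cycles += 1
        else:
            # Data differs: let the clock through and capture the new word.
            self.value = data_in
            self.clocked_cycles += 1

# Hypothetical stream dominated by runs of zeros and ones.
stream = [0] * 12 + [0xFF] * 6 + [0x3C, 0x5A] + [0] * 12
reg = GatedRegister()
for word in stream:
    reg.tick(word)

total = reg.clocked_cycles + reg.gated_cycles
print(f"clocked {reg.clocked_cycles} of {total} cycles "
      f"({reg.gated_cycles / total:.0%} gated)")
```

For this mostly repetitive stream, only 4 of 32 cycles need a clock edge. Real gating cells make the decision in hardware every cycle and add their own area and power overhead.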

I/O die redesign

The MI300X used four separate I/O dies, but AMD has reduced this to two larger dies in the MI355X, which are directly connected to each other. That’s a consolidation that, AMD says, delivers meaningful efficiency gains beyond simply reducing die count.

Fewer die-to-die crossings have enabled AMD to remove the circuitry previously required to handle domain crossings and protocol translations. The freed-up area went into widening the Infinity Fabric data pipeline so that peak HBM bandwidth could be delivered at lower operating voltages and frequencies. AMD's claim of 1.3x better HBM read bandwidth per watt compared to the MI300X follows from this; the raw bandwidth figure increased 1.5x (from 5.3 to 8.0 TB/s), but the efficiency gain came from running the fabric at a less power-hungry operating point.
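
A quick back-of-envelope check shows how the two figures fit together: if bandwidth rose about 1.5x while bandwidth per watt rose 1.3x, the power spent delivering that bandwidth rose by their quotient. This assumes both ratios describe the same HBM read path under the same conditions, which the article does not confirm.

```python
# Back-of-envelope: power ratio = bandwidth ratio / (bandwidth-per-watt ratio).
# Assumption: both ratios refer to the same HBM read path and measurement
# conditions, which the article does not spell out.

mi300x_bw_tbs = 5.3
mi355x_bw_tbs = 8.0
bw_per_watt_gain = 1.3  # AMD's claimed ratio

bw_gain = mi355x_bw_tbs / mi300x_bw_tbs
implied_power_ratio = bw_gain / bw_per_watt_gain

print(f"bandwidth gain:      {bw_gain:.2f}x")
print(f"implied power ratio: {implied_power_ratio:.2f}x")
```

Under that assumption, roughly 51% more bandwidth costs roughly 16% more power.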

AMD also targeted roughly 20% lower interconnect power on the I/O die (IOD) through what it described as custom wire engineering, in which its engineers tuned segment lengths, optimized routing patterns, and selected non-default routing rules. AMD acknowledges, however, that these figures are pre-silicon engineering estimates.

Meanwhile, a bigger, faster Local Data Share (LDS), an on-chip scratchpad memory inside each compute unit, “improves the utilization of the newly expanded matrix compute unit with extensive on-chip data reuse,” Adaikkalavan explains. The LDS is substantially larger in the MI350X series than in the MI300X series, at 160KB per CU versus 64KB, with double the bandwidth.

During matrix multiply-accumulate operations, the LDS feeds data directly to the matrix compute units, and a larger LDS reduces how often the GPU has to reach out to slower memory tiers to reload operand data. AMD also added a direct LDS load path from the L1 data cache that eliminates intermediate register usage, reducing memory latency for these operations further.
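
Why a larger scratchpad helps can be shown by counting operand fetches in a blocked matrix multiply. In this simplified scalar model, a Python list stands in for the LDS and every copy into it counts as a read from slower memory; real GPU kernels load tiles cooperatively across many threads, and the function names here are hypothetical.

```python
import random

def matmul_naive(a, b):
    """Reference matmul that fetches both operands from 'global memory' per multiply."""
    m, k, n = len(a), len(b), len(b[0])
    out = [[0] * n for _ in range(m)]
    loads = 0
    for i in range(m):
        for j in range(n):
            for p in range(k):
                out[i][j] += a[i][p] * b[p][j]
                loads += 2  # one element of A, one of B
    return out, loads

def matmul_tiled(a, b, tile):
    """Stage tile x tile blocks of A and B in a scratchpad (stand-in for LDS) and reuse them."""
    m, k, n = len(a), len(b), len(b[0])
    out = [[0] * n for _ in range(m)]
    loads = 0
    for i0 in range(0, m, tile):
        for j0 in range(0, n, tile):
            for p0 in range(0, k, tile):
                # The only global reads: copy one block of A and one block of B.
                a_blk = [row[p0:p0 + tile] for row in a[i0:i0 + tile]]
                b_blk = [row[j0:j0 + tile] for row in b[p0:p0 + tile]]
                loads += sum(map(len, a_blk)) + sum(map(len, b_blk))
                # Each staged element is reused across a whole row or column of the block.
                for di, a_row in enumerate(a_blk):
                    for dj in range(len(b_blk[0])):
                        out[i0 + di][j0 + dj] += sum(
                            a_val * b_blk[dp][dj] for dp, a_val in enumerate(a_row)
                        )
    return out, loads

random.seed(0)
size, tile = 32, 8
a = [[random.randint(-4, 4) for _ in range(size)] for _ in range(size)]
b = [[random.randint(-4, 4) for _ in range(size)] for _ in range(size)]

ref, naive_loads = matmul_naive(a, b)
res, tiled_loads = matmul_tiled(a, b, tile)
assert res == ref
print(f"naive loads: {naive_loads}, tiled loads: {tiled_loads}, "
      f"reduction: {naive_loads / tiled_loads:.0f}x")
```

The reduction equals the tile edge in this model, so on-chip capacity (160KB versus 64KB per CU here) caps how large a tile can stay resident and, with it, how often operands must be reloaded.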

Performance numbers (and caveats)

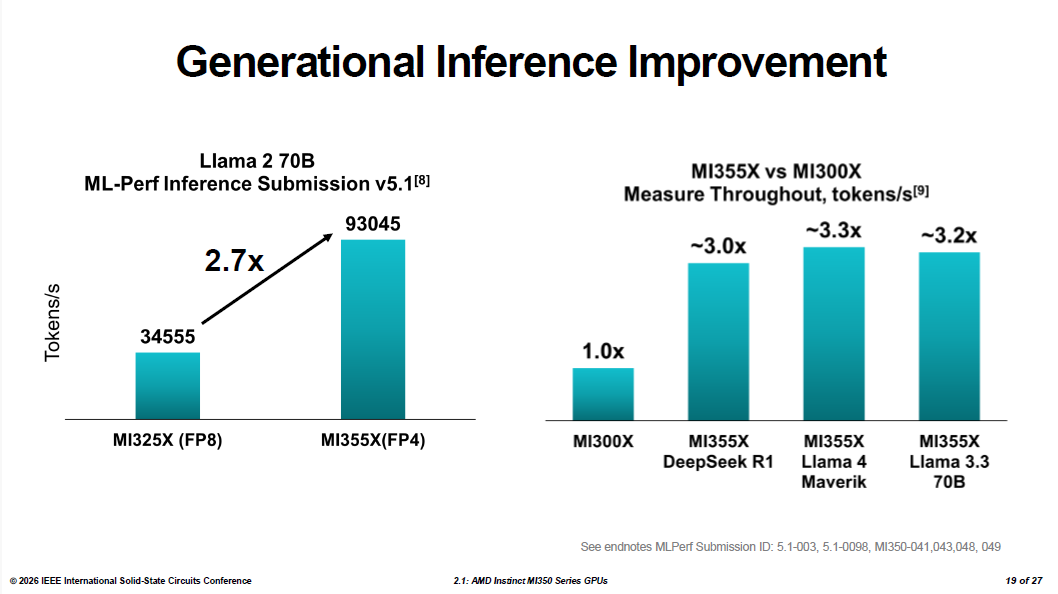

AMD submitted the MI355X to MLPerf Inference v5.1, where it achieved 93,045 tokens per second on the Llama 2 70B benchmark — a 2.7x improvement over the MI325X. In internal throughput comparisons, running FP4 inference against the MI300X's FP8 results, AMD showed roughly a threefold improvement in token generation across DeepSeek R1, Llama 4 Maverick, and Llama 3.3 70B.

It’s worth noting that those figures pit the MI355X's FP4 results against the MI300X's FP8, and the MI300X never supported FP4. So, while this data does demonstrate a generational improvement in practice, it doesn’t isolate hardware from software and data format improvements.

The training comparison against Nvidia has a similar caveat. AMD's data shows the MI355X completing a Llama 2 70B LoRA fine-tuning run in 10.18 minutes, versus 11.15 minutes for the GB200—about 10% faster. AMD's result came from MLPerf Training v5.1 using FP4, while the Nvidia figure is the GB200's last published FP8 score from MLPerf Training v5.0; Nvidia has not submitted a comparable FP4 training result.

Adaikkalavan was candid about what the parity result reflects: "We are actually matching the performance of the more expensive and complex GB200. It tells you a couple of things. One, we have strong hardware, which we always knew. And second, the open software frameworks have made tremendous progress."

AMD's figures show the MI355X carrying 288GB of HBM3E against the B200's 192GB and delivering roughly double the FP64 throughput (2.1x the B200). For general inference workloads, the two accelerators are at rough parity. The MI355X's larger memory pool remains its most consistent advantage, letting it run large models without distributing them across multiple GPUs.
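
The capacity advantage comes down to a weights-only footprint check: parameter count times bytes per parameter, compared with on-package HBM. The 250-billion-parameter model below is hypothetical, and the sketch ignores the KV cache, activations, quantization scale factors, and runtime overhead, all of which shrink the usable headroom in practice.

```python
# Weights-only footprint: parameters x bytes per parameter versus HBM capacity.
# Simplifications (assumptions): decimal gigabytes; no KV cache, activations,
# quantization scale factors, or runtime overhead.

BYTES_PER_PARAM = {"FP16": 2.0, "FP8": 1.0, "FP4": 0.5}
HBM_GB = {"MI355X": 288, "B200": 192}

def weights_gb(params_billions: float, fmt: str) -> float:
    """Approximate weight storage in decimal GB (1e9 params x bytes / 1e9)."""
    return params_billions * BYTES_PER_PARAM[fmt]

def fits_on_one_gpu(params_billions: float, fmt: str, gpu: str) -> bool:
    """True if the weights alone fit in a single GPU's HBM."""
    return weights_gb(params_billions, fmt) <= HBM_GB[gpu]

# A hypothetical 250B-parameter model, chosen only to illustrate the threshold.
for fmt in ("FP8", "FP4"):
    for gpu in HBM_GB:
        verdict = "fits" if fits_on_one_gpu(250, fmt, gpu) else "must be split"
        print(f"250B @ {fmt} ({weights_gb(250, fmt):.0f} GB) on {gpu}: {verdict}")
```

Under these assumptions, the hypothetical model fits on a single MI355X at FP8 but would need splitting across B200s; at FP4 it fits on either, which is one reason data format and memory capacity are hard to separate in these comparisons.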

Both the MI350X (1,000W TBP, 2,200 MHz) and the flagship MI355X (1,400W TBP, 2,400 MHz) maintain the same physical form factor as the MI300X. AMD built that constraint into the project from the start, designing the entire CDNA 4 generation to function as a drop-in infrastructure upgrade for existing MI300-based servers rather than requiring new rack designs or cooling infrastructure.

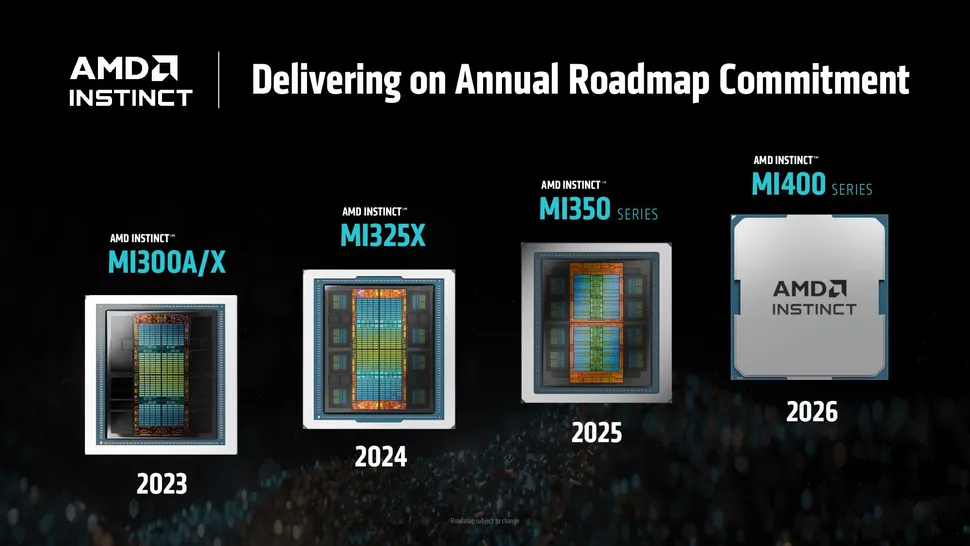

With the MI400-series waiting in the wings, however, the MI350 series will soon play second fiddle. The MI400 is built on TSMC's N2 process, with 432GB of HBM4 and roughly double the compute. AMD continues to promise those chips for the second half of this year. But in a world where every AI FLOP is potentially valuable, both AMD and its customers will likely continue to optimize performance on the MI350 family for some time to come.