SambaNova introduces new AI accelerator, partners with Intel to deploy Xeon CPUs for inferencing and agentic workloads: SambaNova claims SN50 chip is three times more efficient than Nvidia B200

Intel teams up with a new kid on the block.

This week, Intel and SambaNova entered a multi-year strategic collaboration aimed at building large-scale AI inference infrastructure around Intel Xeon platforms and SambaNova AI accelerators. In addition, SambaNova introduced its SN50 AI processor for agentic inference, and inked a deal with SoftBank to deploy it at the latter's data centers.

SambaNova says its SN50 AI accelerator platform is around five times faster and three times more efficient than Nvidia's B200. Additionally, the company has signed a pact with Intel to offer Xeon-based AI systems to enterprises and governments.

"AI is no longer a contest to build the biggest model," said Rodrigo Liang, co‑founder and CEO of SambaNova. "With the SN50 and our deep collaboration with Intel, the real race is about who can light up entire data centers with AI agents that answer instantly, never stall, and do it at a cost that turns AI from an experiment into the most profitable engine in the cloud."

SN50: Purpose-built for inference

Seeing AI inference as a potentially larger market than AI training, SambaNova built the SN50 accelerator for inference workloads rather than training. The design prioritizes low latency for real-time applications such as voice assistants, along with memory and network bandwidth and power consumption, over pure compute performance. The dual-chiplet processor is based on SambaNova’s Reconfigurable Data Unit (RDU) architecture and features a three-tier memory subsystem (SRAM, HBM, and DDR5) designed to keep multiple models resident for rapid hot-swapping, along with mechanisms to optimize memory usage, which benefits both utilization and power consumption.

The company says the new SN50 processor delivers five times more compute per accelerator and four times the networking bandwidth of its predecessor. It also claims that the SN50 offers five times more compute than ‘competing’ offerings, but does not disclose which products it is comparing against (presumably the B200, though that is our guess). SambaNova further boasts a threefold lower cost of ownership for inference compared to GPU-based systems.

In line with the latest industry trends, SambaNova positions the SN50 RDU mainly as a building block of its SambaRack SN50 rack-scale solution rather than as a standalone processor, and the rack is how these AI accelerators will primarily be marketed. Each 20 kW SambaRack SN50 packs 16 SN50 RDU processors, and 16 racks can interconnect up to 256 accelerators using a multi-terabyte-per-second fabric. The company stresses that at 20 kW per rack, the SambaRack SN50 operates within existing data center power envelopes and relies on air cooling, eliminating the need for liquid cooling or data center modifications.
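To put the rack figures in perspective, here is a minimal back-of-the-envelope sketch using only the numbers quoted above (20 kW per rack, 16 RDUs per rack, up to 16 racks). The function name `cluster_summary` is our own, and the per-RDU figure is simply the whole-rack power budget divided by 16, so it includes host CPUs, networking, and fans rather than reflecting the chip's actual power draw, which SambaNova has not disclosed.

```python
# Figures quoted by SambaNova for the SambaRack SN50.
RACK_POWER_KW = 20    # power budget per rack
RDUS_PER_RACK = 16    # SN50 accelerators per rack
MAX_RACKS = 16        # racks in the largest interconnected configuration


def cluster_summary(racks: int = MAX_RACKS) -> dict:
    """Derive cluster-level totals from the per-rack figures."""
    return {
        "accelerators": racks * RDUS_PER_RACK,
        "total_power_kw": racks * RACK_POWER_KW,
        # Rack budget spread over its RDUs. This is NOT chip TDP: it also
        # covers host CPUs, networking, and cooling fans.
        "rack_power_per_rdu_kw": RACK_POWER_KW / RDUS_PER_RACK,
    }


if __name__ == "__main__":
    for key, value in cluster_summary().items():
        print(f"{key}: {value}")
```

By this arithmetic, a full 16-rack deployment comes to 256 accelerators in roughly 320 kW of rack power, or 1.25 kW of rack budget per accelerator.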

A cluster of 256 SN50 accelerators is designed for extremely large models, including configurations exceeding 10 trillion parameters and context windows of more than 10 million tokens. SambaNova positions this capability as essential for reasoning-heavy and multi-model agentic AI workloads, which demand both scale and responsiveness.
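For a rough sense of what a 10-trillion-parameter model means for a 256-accelerator cluster, the sketch below estimates the weight footprint per accelerator. It rests on assumptions that are ours, not SambaNova's: weights stored at FP8 (one byte per parameter), sharded evenly across all accelerators, and no allowance for KV cache, activations, or replication. SambaNova has not published per-chip memory capacities in this announcement, so this says nothing about which memory tier would hold the weights.

```python
PARAMETERS = 10_000_000_000_000  # 10 trillion, the model size cited
ACCELERATORS = 256               # maximum cluster size cited
BYTES_PER_PARAM = 1              # ASSUMPTION: FP8 weights, 1 byte each


def weights_per_accelerator_gb(
    parameters: int = PARAMETERS,
    accelerators: int = ACCELERATORS,
    bytes_per_param: int = BYTES_PER_PARAM,
) -> float:
    """Weight bytes per accelerator in decimal GB, assuming even sharding."""
    total_bytes = parameters * bytes_per_param
    return total_bytes / accelerators / 1e9


if __name__ == "__main__":
    total_tb = PARAMETERS * BYTES_PER_PARAM / 1e12
    print(f"Total weights: {total_tb:.0f} TB")
    print(f"Per accelerator: {weights_per_accelerator_gb():.2f} GB")
```

Under those assumptions, the weights alone total about 10 TB, or roughly 39 GB per accelerator, before any memory is set aside for a 10-million-token context.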

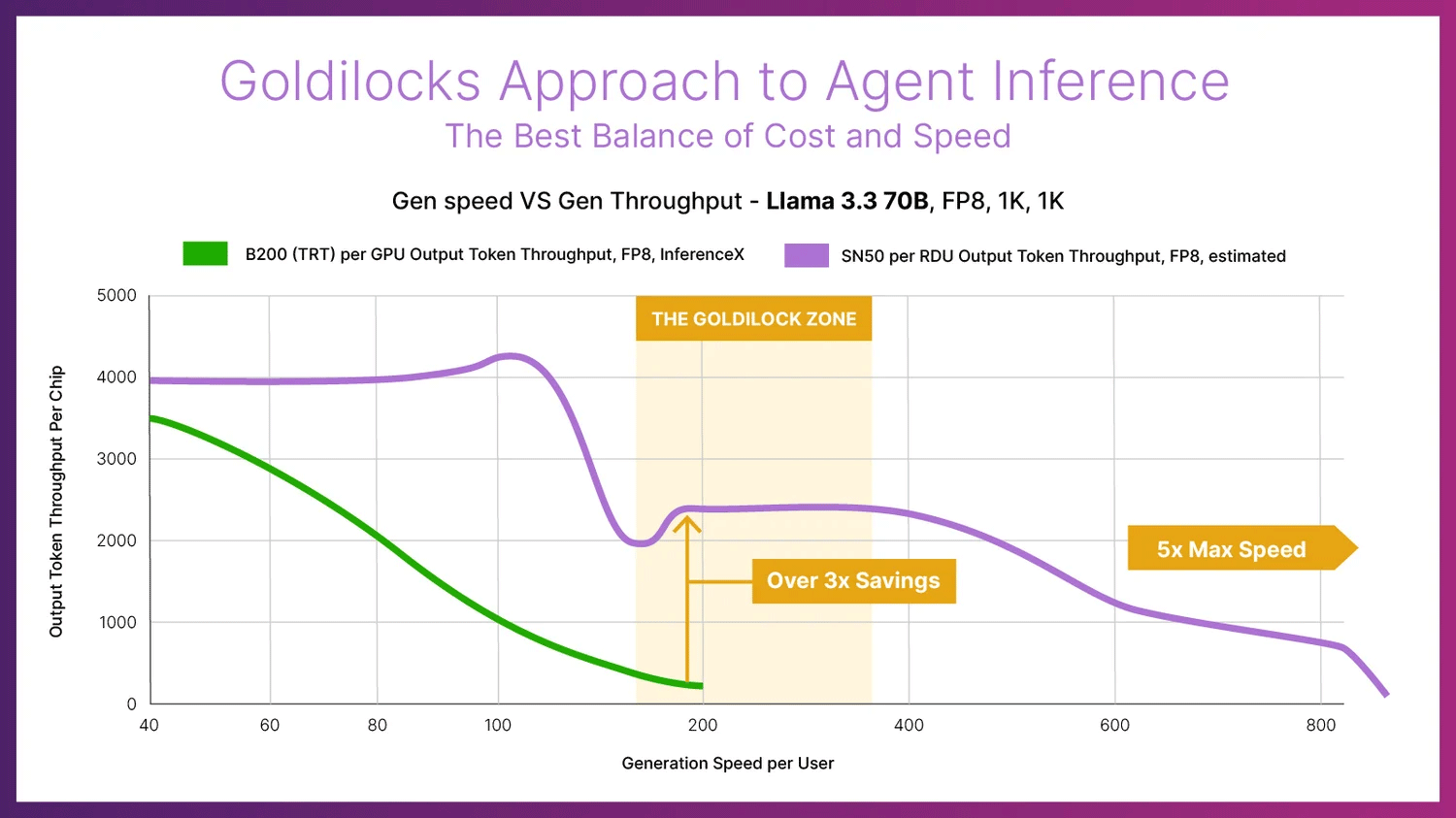

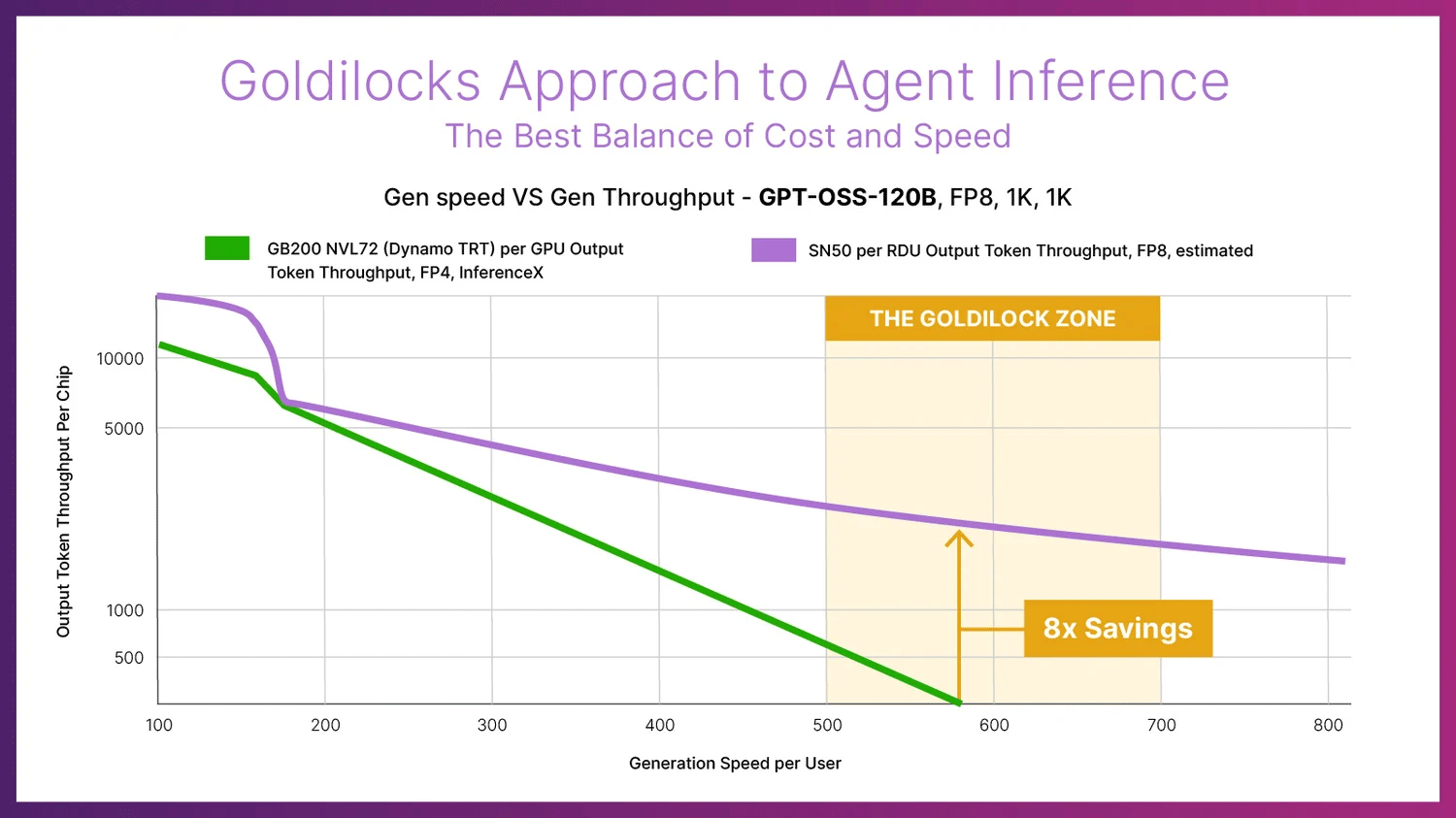

On the performance side, SambaNova cites results from SemiAnalysis’s InferenceX benchmark. When running with FP8 precision, a Llama 3.3 70B model with 1K input and 1K output tokens reportedly achieves 895 tokens per second per user on the SN50, compared to 184 tokens per second per user on Nvidia’s B200. Across a range of configurations, throughput per RDU is presented as significantly higher than throughput per GPU, with an average advantage of approximately 3X when latency constraints are applied across Llama 70B, GPT-OSS 120B, and DeepSeek 671B, which translates to improved cost-per-token economics.
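The "around five times faster" claim can be checked against the two per-user figures quoted above. The short sketch below computes the ratio and, as an illustration, the time each chip would need to stream a 1K-token response. We assume "1K" means 1,000 tokens and ignore time to first token, so the timings are indicative only; the function names are ours.

```python
SN50_TOKENS_PER_S = 895   # per user, as cited by SambaNova
B200_TOKENS_PER_S = 184   # per user, as cited by SambaNova
OUTPUT_TOKENS = 1000      # ASSUMPTION: "1K output tokens" taken as 1,000


def speedup(fast: float, slow: float) -> float:
    """Ratio of two per-user decode rates."""
    return fast / slow


def generation_seconds(tokens: int, tokens_per_s: float) -> float:
    """Time to stream `tokens` at a steady rate, ignoring time to first token."""
    return tokens / tokens_per_s


if __name__ == "__main__":
    print(f"Speedup: {speedup(SN50_TOKENS_PER_S, B200_TOKENS_PER_S):.2f}x")
    sn50_s = generation_seconds(OUTPUT_TOKENS, SN50_TOKENS_PER_S)
    b200_s = generation_seconds(OUTPUT_TOKENS, B200_TOKENS_PER_S)
    print(f"SN50: {sn50_s:.2f} s, B200: {b200_s:.2f} s")
```

The ratio works out to roughly 4.9x for this single configuration, which lines up with the headline claim. The approximately 3X average across models is a separate figure, measured as throughput per chip under latency constraints.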

The SambaRack SN50 systems will be available in the second half of 2026. Pricing has not been disclosed.

The pact with Intel

One of the key parts of this week's SambaNova announcements is arguably its pact with Intel. Under the terms of the deal, the companies will offer rack-scale solutions for AI workloads based on Intel Xeon processors and SambaNova AI accelerators for several years. SambaNova does not disclose which CPU powers its SambaRack SN50, but it looks like future racks from the company will be Xeon-based.

The joint effort between Intel and SambaNova targets select applications and types of customers, such as AI inference solutions for AI-native companies, model providers, as well as enterprises and government organizations worldwide. The latter two are Intel's traditional customers, which makes us wonder whether this is a coordinated go-to-market strategy through Intel's enterprise and cloud channels for SambaNova's production-ready inference systems, or a less harmonized approach.

In any case, an x86 Intel Xeon CPU, along with Intel networking technologies, will certainly make SambaRacks more appealing to enterprises and governments.

Notably, Intel emphasized that this agreement with SambaNova complements its existing GPU roadmap rather than replacing it, so the company will continue offering its own GPUs for inference and eventually for training.

Perhaps the most controversial part of the announcement is that Intel Capital is also participating in SambaNova's Series E financing round. SambaNova is chaired by Lip-Bu Tan, who also happens to be the chief executive of Intel. While it is common for companies of Intel's scale to make strategic investments in their smaller ecosystem partners, taking a stake in a business chaired by the investor's own top executive is far from routine.

The deal with SoftBank

A less sensitive but no less interesting part of the SambaNova announcement is its pact with SoftBank. SoftBank will be the first to roll out the SN50 in its next-generation AI data centers in Japan. These data centers will use the accelerator to drive low-latency inference for sovereign and enterprise customers across Asia-Pacific, running open-source and proprietary frontier models with strict performance requirements.

The move expands SoftBank's existing partnership with SambaNova, which already operates SambaCloud in the region. The new clusters based on the SN50 will be standard for SoftBank's new data centers, which effectively makes SambaNova the core inference platform for SoftBank's sovereign AI programs and upcoming large-scale agentic deployments in the region.

"With SN50, we are building an AI inference fabric for Japan that can serve our customers and partners with the speed, resiliency and sovereignty they expect from SoftBank," said Hironobu Tamba, Vice President and Head of the Data Platform Strategy Division of the Technology Unit at SoftBank Corp. "By standardizing on SN50, we gain the ability to deliver world‑class AI services on our own terms — with the performance of the best GPU clusters, but with far better economics and control."

$350 million in Series E funding

Last but not least, SambaNova has secured $350 million in strategic Series E funding to expand manufacturing and cloud capacity from such investors as Vista Equity Partners, Cambium Capital, Intel Capital, and Battery Ventures.

All of this positions SambaNova squarely in the AI inference market. While training workloads demand the fastest processors available, inference introduces a different set of requirements. The Intel partnership should benefit the company here, especially as agentic AI inference workloads also depend on fast CPUs, in this case Intel's Xeons.