Amazon invests $50 billion in OpenAI as OpenAI commits to 2 gigawatts of Trainium silicon; AWS to become exclusive cloud distributor for Frontier enterprise platform

The deal, part of a $110 billion funding round also backed by Nvidia and SoftBank, commits OpenAI to 2 gigawatts of Amazon's custom Trainium silicon.

Amazon and OpenAI have announced a sweeping multi-year strategic partnership, with Amazon committing $50 billion in investment, AWS securing exclusive third-party distribution rights for OpenAI's enterprise agent platform Frontier, and OpenAI agreeing to consume approximately 2 gigawatts of Amazon's custom Trainium compute capacity, according to a press release.

The investment is part of a $110 billion funding round that values OpenAI at $730 billion pre-money, with Nvidia and SoftBank each contributing $30 billion. The companies will also co-develop custom AI models for Amazon's own products, including Alexa, and jointly build a new stateful agent runtime on Amazon Bedrock.

Split between Trainium3 and Trainium4

Amazon's $50 billion commitment is structured in two parts: $15 billion upfront, with the remaining $35 billion contingent on conditions that, according to sources cited by The Information, may require OpenAI to complete an IPO or reach an as-yet-undefined "AGI milestone." The deal also expands OpenAI's prior $38 billion AWS compute agreement, struck in November 2025, by an additional $100 billion over eight years.
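
The reported figures can be sanity-checked with some quick arithmetic. The sketch below assumes the common convention that post-money valuation equals pre-money valuation plus the round size, and that the compute expansion is spread evenly over its eight years. Neither assumption comes from the announcement, and the variable names are purely illustrative.

```python
# Back-of-the-envelope arithmetic on the reported deal figures (billions of USD).
# Assumptions (not from the announcement): post-money = pre-money + round size,
# the implied stake ignores share classes and prior holdings, and the compute
# expansion is spread evenly across its term.

ROUND_TOTAL = 110
PRE_MONEY = 730
AMAZON_TOTAL = 50
AMAZON_UPFRONT = 15
NVIDIA = 30
SOFTBANK = 30
PRIOR_COMPUTE_DEAL = 38
COMPUTE_EXPANSION = 100
EXPANSION_YEARS = 8

# The three named investors together account for the whole round.
named_investors = AMAZON_TOTAL + NVIDIA + SOFTBANK

post_money = PRE_MONEY + ROUND_TOTAL
amazon_contingent = AMAZON_TOTAL - AMAZON_UPFRONT
upfront_share = AMAZON_UPFRONT / AMAZON_TOTAL

# Implied stake only if the full $50 billion is eventually funded.
stake_if_fully_funded = AMAZON_TOTAL / post_money

compute_total = PRIOR_COMPUTE_DEAL + COMPUTE_EXPANSION
expansion_per_year = COMPUTE_EXPANSION / EXPANSION_YEARS

print(f"Named investors cover the round: {named_investors == ROUND_TOTAL}")
print(f"Implied post-money valuation: ${post_money}B")
print(f"Upfront share of Amazon's commitment: {upfront_share:.0%}")
print(f"Contingent tranche: ${amazon_contingent}B")
print(f"Implied Amazon stake if fully funded: {stake_if_fully_funded:.1%}")
print(f"Total AWS compute agreement: ${compute_total}B")
print(f"Average expansion per year: ${expansion_per_year}B")
```

On these assumptions, less than a third of Amazon's headline figure is committed upfront, and the rest depends on the conditions described above.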

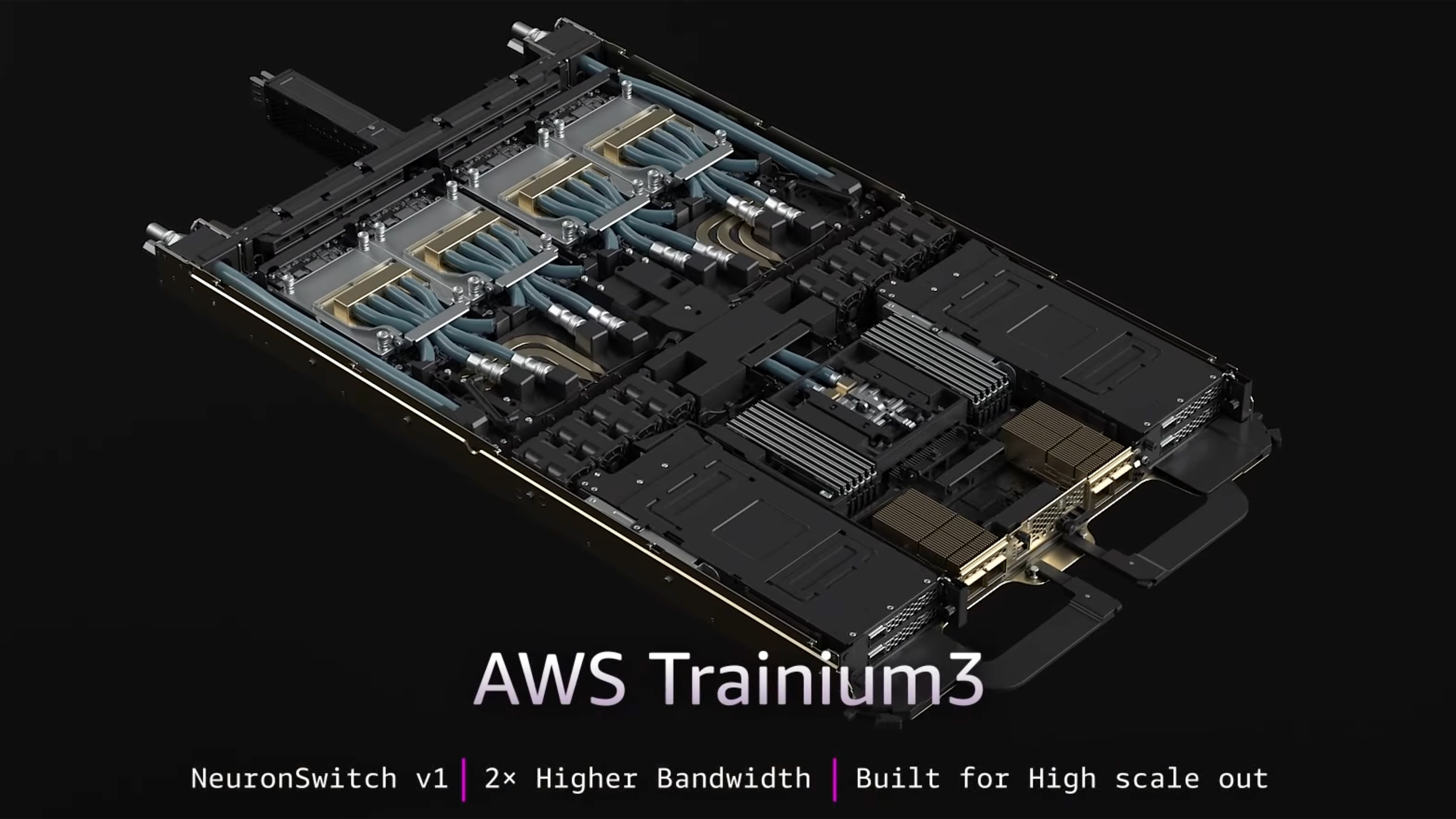

Meanwhile, the 2 gigawatts of Trainium compute will span both the current Trainium3 generation and the upcoming Trainium4, which is expected to ship next year. Amazon launched Trainium3, a 3nm chip that it says delivers four times the performance of its predecessor with 40% better energy efficiency, at its re:Invent conference in December 2025.

AWS has stated that customers can achieve cost savings of 30% to 40% running training and inference workloads on Trainium compared to equivalent Nvidia GPU configurations. Each Trainium3 UltraServer holds 144 chips, and up to 1 million chips can be linked in a single cluster.
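
To put those numbers in proportion, the following sketch works through the arithmetic. It assumes the 1 million limit counts chips rather than UltraServers, and it uses a hypothetical baseline cost of 100 units for the Nvidia configuration. Both are assumptions made here for illustration, as is the helper name `trainium_cost_range`.

```python
CHIPS_PER_ULTRASERVER = 144
MAX_CHIPS_PER_CLUSTER = 1_000_000  # assumption: the limit refers to chips

# Number of fully populated UltraServers that fit under the cluster limit.
full_ultraservers = MAX_CHIPS_PER_CLUSTER // CHIPS_PER_ULTRASERVER
chips_in_full_ultraservers = full_ultraservers * CHIPS_PER_ULTRASERVER


def trainium_cost_range(gpu_cost, low_saving=0.30, high_saving=0.40):
    """Return (lowest, highest) Trainium cost implied by the claimed savings.

    gpu_cost is a hypothetical baseline for an equivalent Nvidia GPU setup.
    """
    return gpu_cost * (1 - high_saving), gpu_cost * (1 - low_saving)


lowest, highest = trainium_cost_range(100.0)

print(f"Full UltraServers per cluster: {full_ultraservers}")
print(f"Chips in those UltraServers: {chips_in_full_ultraservers}")
print(f"Trainium cost per 100 units of GPU spend: {lowest:.0f} to {highest:.0f}")
```

In other words, a cluster at the stated limit would contain a little under 7,000 UltraServers, and AWS's claim implies paying roughly 60 to 70 units for work that would cost 100 on comparable GPUs.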

Trainium4 is being designed with support for Nvidia's NVLink Fusion interconnect, which allows Trainium4-based systems to interoperate with Nvidia GPUs within the same server rack. Nvidia's CUDA software stack remains the de facto standard on which nearly all large AI workloads are built, and migrating away from it means rewriting significant portions of a codebase.

Anthropic, in which Amazon has invested at least $8 billion, already trains its Claude models on Trainium at scale — Project Rainier, Amazon's largest dedicated AI data center, houses more than 500,000 Trainium2 chips running Anthropic workloads exclusively. But Anthropic is financially entangled with Amazon. OpenAI is not, which makes its decision to commit 2 gigawatts to Trainium a notably independent validation of the platform.

Let’s not forget that OpenAI also has a separate deal with Broadcom to develop its own custom ASICs, uses Nvidia GPUs through both Azure and AWS, and has committed to AMD chips — so, again, its willingness to stake 2 gigawatts on Trainium is a weighty decision.

Stateful Runtime Environment

Beyond compute commitments, Amazon and OpenAI have announced that they’re co-developing a so-called “Stateful Runtime Environment” (SRE) built on Amazon Bedrock and expected to launch within the next few months.

Most AI agents run on Retrieval-Augmented Generation (RAG) architectures that effectively use a model as an advanced search engine over a set of embedded documents. The issue with this architecture is that the agents can’t retain memory between sessions or carry context across different software tools, and they reset with every new interaction.

SRE, Amazon says, keeps context across calls, retains memory of prior work, integrates with AWS data sources, including S3 storage and IAM identity controls, and allows agents to operate persistently across ongoing projects rather than treating each call as isolated.
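
The difference between isolated calls and a persistent runtime can be illustrated with a toy sketch. This is not the Bedrock or OpenAI API, whose details have not been published. `fake_model`, `StatelessAgent`, and `StatefulAgent` are hypothetical names, and an in-memory dictionary stands in for whatever durable storage and identity controls a real runtime would use.

```python
from dataclasses import dataclass, field


def fake_model(prompt: str, context: list[str]) -> str:
    """Stand-in for a model call; reports how much prior context it received."""
    return f"reply to {prompt!r} with {len(context)} prior turn(s) of context"


class StatelessAgent:
    """Treats every call as isolated: nothing carries over between calls."""

    def call(self, prompt: str) -> str:
        return fake_model(prompt, context=[])


@dataclass
class StatefulAgent:
    """Keeps a per-session history so later calls can see earlier work."""

    sessions: dict[str, list[str]] = field(default_factory=dict)

    def call(self, session_id: str, prompt: str) -> str:
        # Look up (or create) the history for this session.
        history = self.sessions.setdefault(session_id, [])
        reply = fake_model(prompt, context=history)
        # Record the prompt so the next call in this session sees it.
        history.append(prompt)
        return reply


stateless = StatelessAgent()
print(stateless.call("draft the plan"))
print(stateless.call("revise the plan"))   # still sees no prior turns

stateful = StatefulAgent()
print(stateful.call("project-a", "draft the plan"))
print(stateful.call("project-a", "revise the plan"))  # sees the earlier turn
```

The second stateful call receives the first prompt as context, while the stateless agent starts from scratch each time. A production runtime would also need persistence, access control, and limits on how much history is replayed, none of which this sketch attempts.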

Frontier, OpenAI's enterprise agent platform for building and deploying coordinated AI agent teams across business systems, will have AWS as its exclusive third-party cloud distributor, and the SRE on Bedrock is where that infrastructure will sit.

Microsoft-OpenAI partnership ‘strong and central’

Several initial reports following the announcement have framed the deal as AWS displacing Microsoft in its relationship with OpenAI, but that’s not accurate. Microsoft says it “maintains its exclusive license and access to intellectual property across OpenAI models and products,” with Azure remaining the exclusive cloud provider for OpenAI's stateless API calls.

Microsoft also retains the option to participate in the current funding round, and the two companies have issued joint statements affirming that the partnership remains "strong and central." Under the Amazon-OpenAI deal, AWS gains the enterprise agent deployment side, while stateless API traffic stays on Azure.

AWS holds approximately 30% of the global cloud market heading into this announcement, compared to Azure's roughly 20% and Google Cloud's 13%. Despite that market position, Amazon had been widely characterized as trailing in the generative AI race relative to Microsoft's early OpenAI integration and Google's push with Gemini.

Exclusive distribution rights for Frontier, combined with Trainium's cost positioning, fit Amazon's consistent approach to cloud competition, whereby it prioritizes infrastructure scale and cost efficiency over all else. Amazon is now financially backing both of the leading independent frontier AI labs simultaneously — OpenAI and Anthropic — which positions it as infrastructure for the industry, regardless of which organization's models prove most durable commercially.

This comes with significant financial exposure for Amazon, which is spending approximately $200 billion in capital expenditure in 2026, the majority directed at data centers and AI infrastructure. Its stock had fallen about 8% on the year as investors weighed the return timeline on those outlays. Amazon CEO Andy Jassy told CNBC today that he expected OpenAI to be "one of the very big winners" over the long term, but added that Amazon “still has a very strong relationship with Anthropic.” OpenAI CEO Sam Altman said the company now has more than 900 million weekly active users and more than 50 million consumer subscribers. Back in October, he described an IPO as the company's "most likely path" given ongoing capital demands.

The FTC issued subpoenas to Amazon, Microsoft, Google, OpenAI, and Anthropic in early 2024 to examine AI partnerships, with particular attention to exclusivity arrangements, and AWS's exclusive rights to distribute Frontier will no doubt give regulators a concrete point of focus. It’s still too early for a legal challenge, but it’s understood that the FTC’s investigation is ongoing, and these new terms could draw closer scrutiny.

For now, the $35 billion contingent tranche means that most of Amazon's headline commitment depends on a trigger, either an IPO or an AGI milestone, that comes with no known or guaranteed timeline. Until one of those conditions is met, the investment stands at $15 billion.