Micron sampling first 256GB SOCAMM2 memory packages to customers — 2TB of RAM per CPU is now in reach of datacenter players

A 33% leap in capacity in six months is an impressive feat.

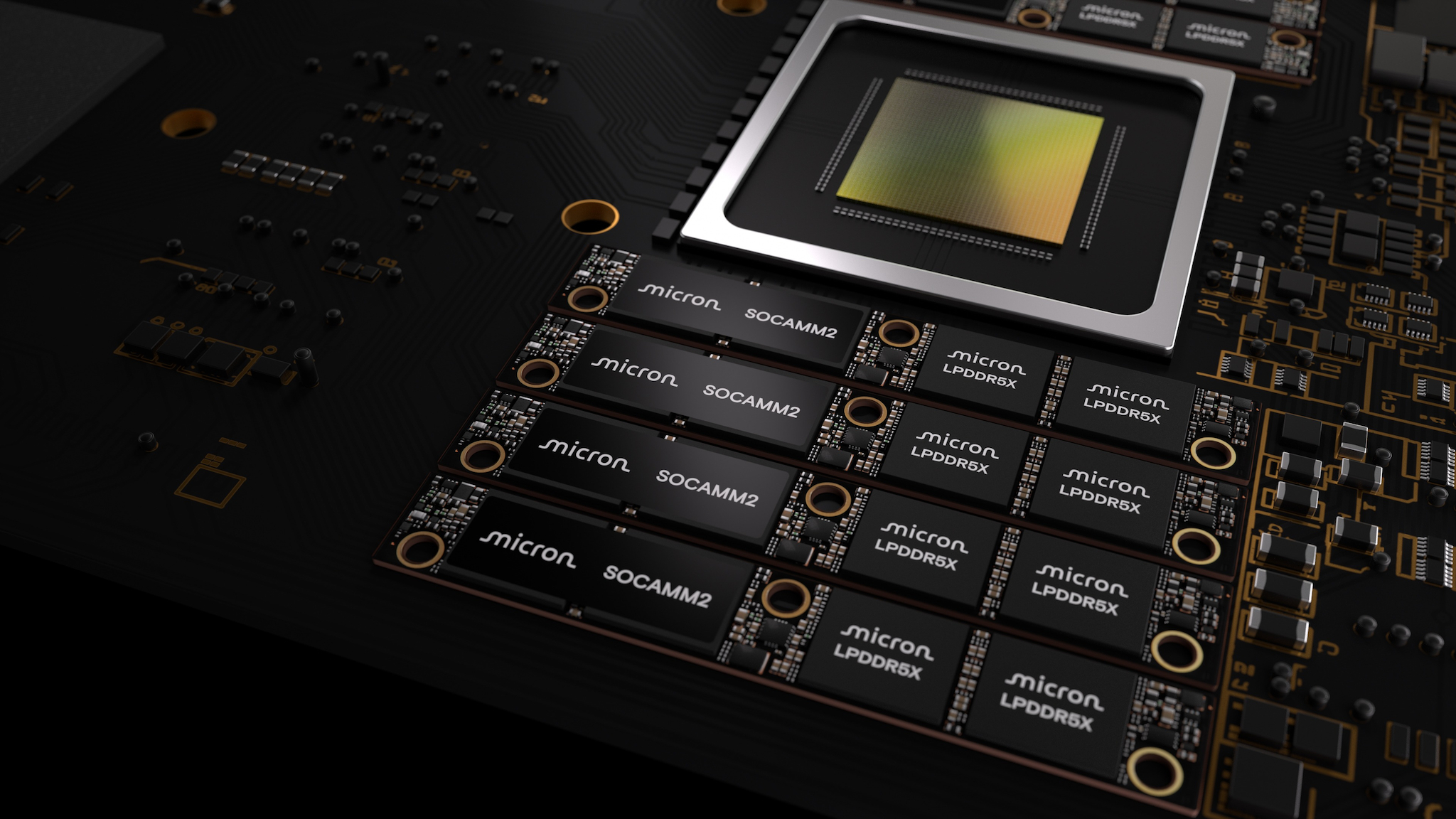

Most of the conversation about speed in AI datacenters focuses on the accelerators themselves: tokens per second and the like. However, the battle for AI performance is fought on multiple fronts, and memory capacity and power efficiency are among them. Today, Micron unveiled what appear to be the industry's first 256 GB SOCAMM2 modules, a sizable step up from the previous-best 192 GB modules released just six months ago.

The company says it is now shipping samples to customers, who will surely welcome the prospect of 2 TB of memory wired to each CPU. To take one prominent example, a typical Nvidia NVL72 rack, with its 36 CPUs, can now carry 72 TB of RAM.
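The capacity figures in the paragraph above follow from simple arithmetic. The sketch below is a back-of-the-envelope check, not anything Micron or Nvidia published: it assumes eight SOCAMM2 modules per CPU (the count implied by 2 TB ÷ 256 GB) and treats 1 TB as 1,024 GB to match the article's round numbers. The constant and function names are hypothetical.

```python
# Back-of-the-envelope check of the SOCAMM2 capacity figures.
# Assumptions (hypothetical, not from Micron): eight modules per CPU,
# and 1 TB = 1,024 GB.

MODULE_GB = 256        # new Micron SOCAMM2 module
PREV_MODULE_GB = 192   # previous-best SOCAMM2 module
MODULES_PER_CPU = 8    # assumed; implied by 2 TB / 256 GB
CPUS_PER_NVL72 = 36    # CPUs in an NVL72 rack, per the article


def per_cpu_tb(module_gb: int, modules: int) -> float:
    """Memory attached to one CPU, in TB (1 TB = 1,024 GB)."""
    return module_gb * modules / 1024


def rack_tb(module_gb: int, modules: int, cpus: int) -> float:
    """Total CPU-attached memory in one rack, in TB."""
    return per_cpu_tb(module_gb, modules) * cpus


def capacity_gain_pct(new_gb: int, old_gb: int) -> float:
    """Generation-over-generation capacity increase, in percent."""
    return (new_gb / old_gb - 1) * 100


if __name__ == "__main__":
    print(per_cpu_tb(MODULE_GB, MODULES_PER_CPU))                  # 2.0
    print(per_cpu_tb(PREV_MODULE_GB, MODULES_PER_CPU))             # 1.5
    print(rack_tb(MODULE_GB, MODULES_PER_CPU, CPUS_PER_NVL72))     # 72.0
    print(round(capacity_gain_pct(MODULE_GB, PREV_MODULE_GB), 1))  # 33.3
```

Under the same eight-module assumption, the previous 192 GB generation tops out at 1.5 TB per CPU, which is where the 33% figure comes from.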

The 33% density increase over the previous-generation SOCAMM2 is excellent news on its own, but it is not the form factor's only advantage. The new modules should offer 66% better power efficiency than bog-standard RDIMMs, and they are compatible with the increasingly popular (and necessary) liquid cooling used in AI servers.

According to Micron, the new sticks are the first to employ its 32 Gb (4 GB) LPDDR5X monolithic dies, where "monolithic" means all the memory and relevant circuitry are part of a single die.
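A 32 Gb die holds 4 GB, so the die count of a 256 GB module follows directly. The sketch below is illustrative only: it assumes the module's entire rated capacity comes from these dies (no spare or extra ECC dies are counted), and the function names are hypothetical. The 16 Gb case is a hypothetical comparison showing why denser dies matter.

```python
import math

# Minimum number of memory dies needed for a module of a given capacity.
# Assumption: all rated capacity comes from the dies themselves
# (no spare or extra ECC dies are counted).

BITS_PER_BYTE = 8


def die_gb(die_gbit: int) -> float:
    """Convert a die's density from gigabits to gigabytes."""
    return die_gbit / BITS_PER_BYTE


def dies_needed(module_gb: int, die_gbit: int = 32) -> int:
    """Minimum number of dies needed to reach module_gb, rounded up."""
    return math.ceil(module_gb / die_gb(die_gbit))


if __name__ == "__main__":
    print(die_gb(32))            # 4.0
    print(dies_needed(256))      # 64
    print(dies_needed(256, 16))  # 128, with hypothetical 16 Gb dies
```

Halving the die density doubles the number of dies that must fit on the same module footprint, which is one reason higher-density monolithic dies are the enabler for larger modules.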

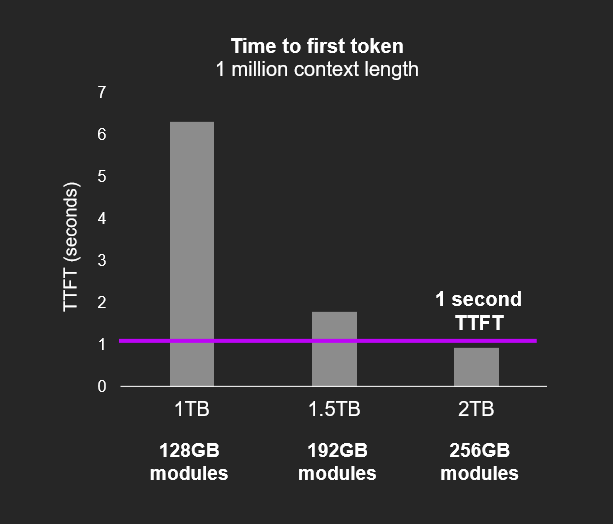

Given the target market for these large SOCAMM2 modules, Micron also touts real-world performance gains beyond density and power efficiency. Having this much RAM available to a single processor lets AI models use much larger context windows. That, in turn, helps reduce the all-important TTFT (time to first token), meaning chatbots start answering your questions sooner.
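To make the context-window point concrete, the sketch below estimates how many tokens of transformer key/value (KV) cache fit in a given amount of memory. It rests on hypothetical assumptions: that the CPU-attached memory is used to hold KV cache, and that the model has 80 layers, 8 KV heads, a head dimension of 128, and 16-bit (2-byte) values. These dimensions are illustrative placeholders, not a model Micron tested. Real deployments also need room for weights, activations, and overhead, so the results are upper bounds.

```python
# Rough upper bound on how many tokens of KV cache fit in a memory pool.
# All model dimensions used below are hypothetical placeholders.

TB = 1024 ** 4  # bytes per TB, using the 1 TB = 1,024 GB convention


def kv_bytes_per_token(layers: int, kv_heads: int, head_dim: int,
                       bytes_per_value: int) -> int:
    """Bytes of KV cache one token occupies: one key and one value
    vector per KV head, per layer."""
    return 2 * layers * kv_heads * head_dim * bytes_per_value


def max_cached_tokens(memory_bytes: int, per_token: int) -> int:
    """Tokens whose KV cache fits entirely in memory_bytes (ignores
    weights, activations, fragmentation, and OS overhead)."""
    return memory_bytes // per_token


if __name__ == "__main__":
    per_token = kv_bytes_per_token(layers=80, kv_heads=8, head_dim=128,
                                   bytes_per_value=2)
    print(per_token)                                    # 327680
    print(max_cached_tokens(int(1.5 * TB), per_token))  # 5033164
    print(max_cached_tokens(2 * TB, per_token))         # 6710886
```

In this toy setup, going from 1.5 TB to 2 TB per CPU raises the cacheable context by a third. Keeping more context cached, rather than recomputing it, is one plausible way extra memory can shorten TTFT.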

In an AI future where context is literally everything, every gigabyte of memory close to a system's xPUs matters. Micron's new modules will doubtless find their way into massive AI server installations worldwide as companies pour hundreds of billions of dollars of capex into the race for AI supremacy.

The SOCAMM2 form factor is the result of a partnership between Nvidia and memory makers Micron, Samsung, and SK hynix. Nvidia originally designed the SOCAMM standard, but the accelerator giant reportedly struggled to keep the modules from overheating in high-density servers. CEO Jensen Huang wisely teamed up with the companies that make memory for a living, and the result is SOCAMM2, with growing density and lower power consumption.
