Nvidia Groq 3 LPU and Groq LPX racks join Rubin platform at GTC — SRAM-packed accelerator boosts 'every layer of the AI model on every token'

Groq tech readies Rubin for the multi-agent system frontier

Nvidia's Vera Rubin platform is poised to massively power up the next generation of AI data centers, or "factories," as CEO Jensen Huang calls them, when those systems start arriving later this year. Today, during his GTC keynote, Huang revealed how Nvidia is using the IP it acquired from Groq last year to expand Rubin's capabilities. The Rubin platform now includes a new chip, the Nvidia Groq 3 LPU, an inference accelerator that bolsters these systems' ability to deliver tokens in volume and at low latency for high interactivity at the leading edge of AI models.

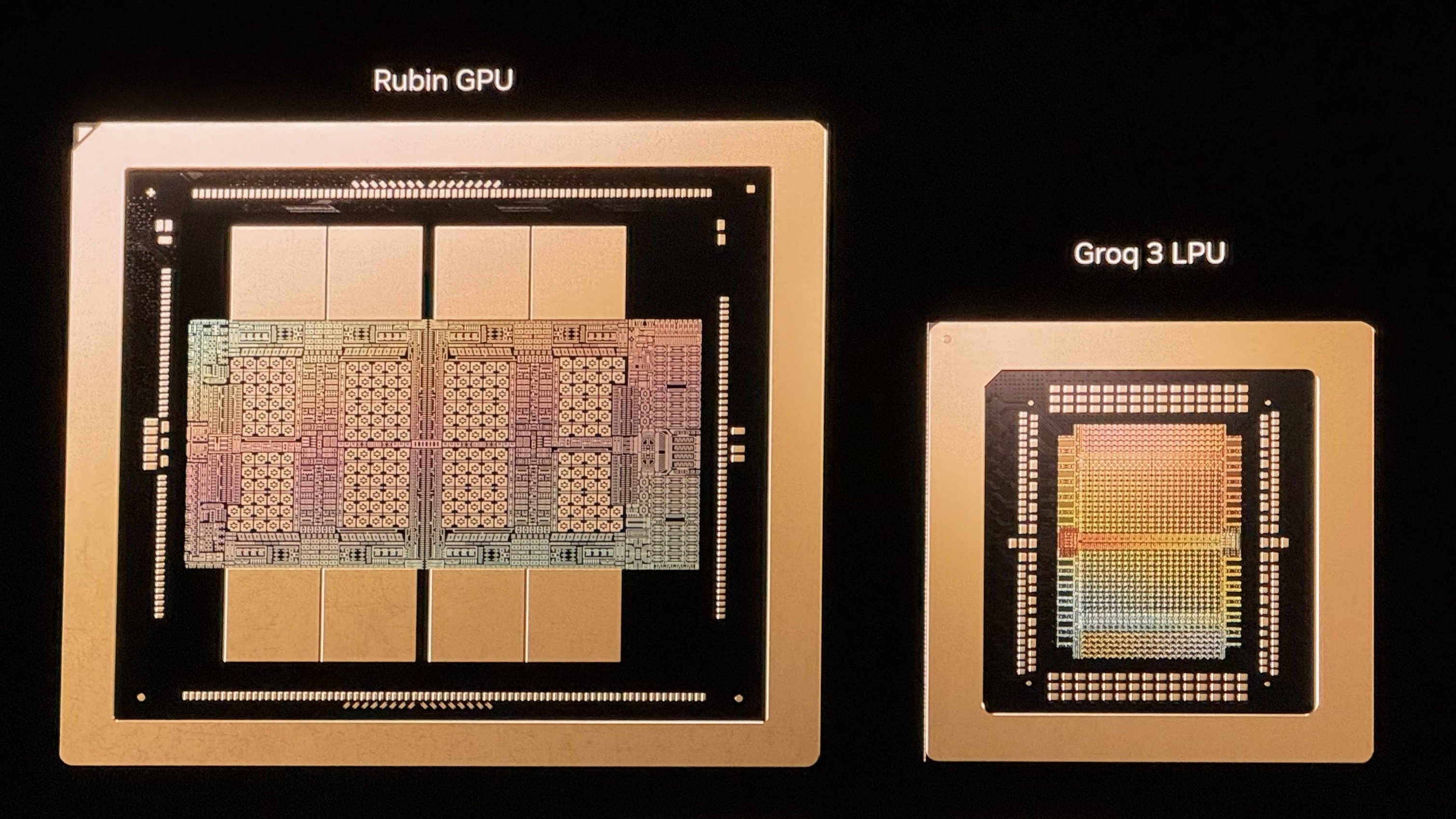

Recall that the Rubin platform already includes six chips from which Nvidia builds up rack-scale systems and scales them out into AI factories: the Rubin GPU itself, the Vera CPU, NVLink 6 scale-up switches, the ConnectX 9 smart NIC, the BlueField 4 data processing unit, and the Spectrum-X scale-out switch with co-packaged optics. The Groq 3 LPU becomes another building block for Rubin at scale.

Unlike most AI accelerators, which rely on HBM as their working memory tier, each Groq 3 LPU incorporates 500 MB of SRAM, the same type of memory used for ultra-high-speed caches on CPUs and GPUs. That’s paltry compared to the 288 GB of HBM4 on each Rubin GPU, but as you would expect, that SRAM delivers 150 TB/s of bandwidth, nearly seven times the 22 TB/s that the HBM4 provides. For bandwidth-sensitive AI decode operations, the massive bandwidth boost of the Groq 3 chip offers tantalizing benefits for inference applications.

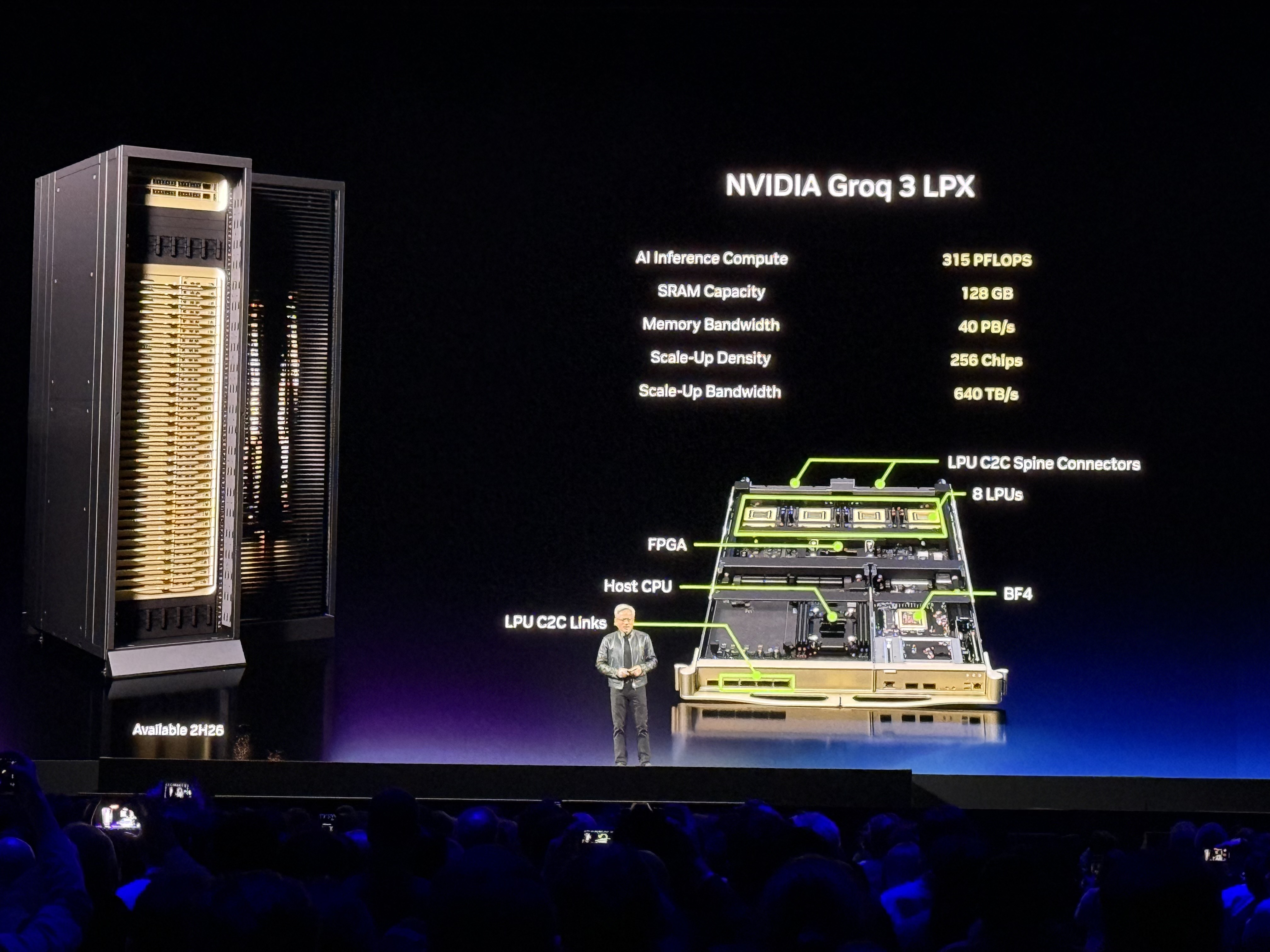

In turn, Nvidia will build up Groq 3 LPX racks comprising 256 Groq 3 LPUs. That rack offers 128 GB of SRAM with 40 PB/s of bandwidth for inference acceleration, and it joins those chips together with a dedicated scale-up interface of 640 TB/s per rack.
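The rack-level figures follow from the per-chip numbers quoted above. The short Python sketch below multiplies them out; the function name and the use of decimal units (1,000 MB per GB, 1,000 TB per PB) are our own assumptions for illustration, not anything Nvidia has published.

```python
# Per-chip and per-rack figures as quoted in the article.
LPUS_PER_RACK = 256
SRAM_PER_LPU_MB = 500
SRAM_BW_PER_LPU_TBPS = 150


def rack_totals(lpus=LPUS_PER_RACK,
                sram_mb=SRAM_PER_LPU_MB,
                bw_tbps=SRAM_BW_PER_LPU_TBPS):
    """Return (SRAM capacity in GB, SRAM bandwidth in PB/s).

    Assumes decimal units: 1 GB = 1,000 MB and 1 PB = 1,000 TB.
    """
    capacity_gb = lpus * sram_mb / 1000
    bandwidth_pbps = lpus * bw_tbps / 1000
    return capacity_gb, bandwidth_pbps


capacity_gb, bandwidth_pbps = rack_totals()
print(f"{capacity_gb:.0f} GB of SRAM, {bandwidth_pbps:.1f} PB/s aggregate")
```

The capacity works out to exactly 128 GB. The bandwidth works out to 38.4 PB/s, which suggests the 40 PB/s figure is a rounded one.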

Nvidia envisions Groq LPX as a co-processor for Rubin that will boost decode performance at “every layer of the AI model on every token,” according to Nvidia hyperscale VP Ian Buck, and it positions Rubin to serve the next frontier of AI: multi-agent systems that need to deliver interactive performance while inferencing models of trillions of parameters with context windows of millions of tokens.

As the AI agents in those multi-agent systems begin talking more and more to other AIs rather than humans looking at chatbot windows, the frontier for responsiveness requirements also shifts. What might seem like a reasonable rate of tokens generated per second for a human is glacial for an AI agent. In the future of multi-agent systems that Buck describes, the combination of Rubin GPUs and Groq LPUs moves us from a world where 100 tokens per second is a reasonable throughput to one of 1500 TPS or more for AI agent intercommunication.
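To put those rates in per-token terms, the Python sketch below converts tokens per second into time per token and into the time needed to emit a message. It assumes the quoted rates describe a single generation stream, and the 3,000-token message length is a hypothetical value chosen only for illustration.

```python
def ms_per_token(tokens_per_second):
    """Average time between tokens, in milliseconds."""
    return 1000.0 / tokens_per_second


def seconds_for(tokens, tokens_per_second):
    """Time to generate a message of the given length, in seconds."""
    return tokens / tokens_per_second


MESSAGE_TOKENS = 3000  # hypothetical agent-to-agent message length

for tps in (100, 1500):
    print(f"{tps} TPS: {ms_per_token(tps):.2f} ms/token, "
          f"{seconds_for(MESSAGE_TOKENS, tps):.0f} s per message")
```

Under those assumptions, an agent waiting on another agent's output would wait about 2 seconds at 1500 TPS versus 30 seconds at 100 TPS, a delay that compounds across every hop in a multi-agent chain.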

The addition of the Groq 3 LPU to the Rubin arsenal could help the platform fend off challengers in the low-latency inference frontier. Cerebras, whose wafer-scale engines fuse massive amounts of SRAM and compute for low-latency inference with advanced models, has frequently needled Nvidia regarding the perceived disadvantages of its GPUs for that purpose, and customers as large as OpenAI have signed up for Cerebras capacity to serve some of their state-of-the-art models with the favorable latency characteristics of that platform.

Buck also hinted that the Groq 3 LPU might lead to a reduced role for the Rubin CPX inference accelerator, saying that the company is currently focused on integrating the Groq 3 LPX rack with Rubin. While he didn’t offer more details, that focus shift would make sense in today’s memory-constricted world, since the two chips are meant to offer similar enhancements for inference performance and the Groq LPU doesn't require the large amount of GDDR7 memory that each Rubin CPX module does.

We’re on the ground at GTC this week, and we’ll be exploring what the fusion of Groq and Nvidia IP means for the future of AI inference through conversations and sessions at the event. Stay tuned.
