AMD's Enterprise CPU and GPU roadmap: Venice, Verano, Zen 6, Helios, and CDNA

We take an in-depth look at AMD's 2026 and 2027 roadmaps.

AMD's position in the data center market has changed drastically in recent years: The company transformed from being an underdog, supplying less than 1% of server CPUs in 2017, to commanding nearly 29% of the server CPU market as of late 2025.

After increasing shipments of processors for data centers by several orders of magnitude in less than 10 years, AMD is changing the way it approaches the market in a bid to continue expansion. On the AI front, AMD is switching to an annual cadence of new product releases, and the company is doing the same for traditional data center CPUs.

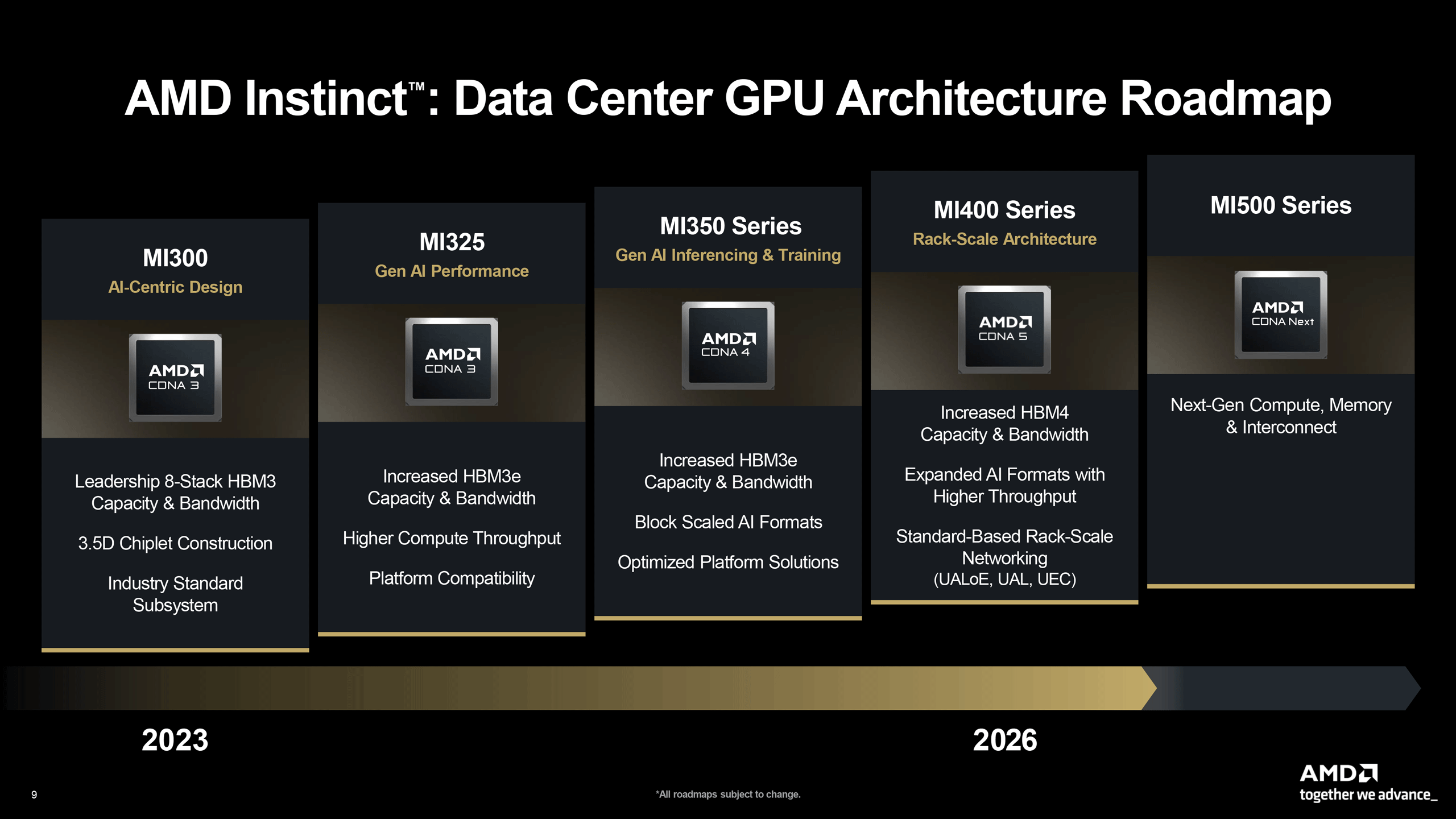

Over the next 24 months, AMD is set to introduce the Zen 6-based EPYC Venice CPU with up to 256 cores in 2026, followed by the EPYC Verano processor in 2027. On the accelerator side, the Instinct MI400-series AI and HPC accelerators arrive in 2026 and will power the Helios rack-scale solution for AI, while the Instinct MI500-series AI and HPC GPUs follow in 2027 and will serve as the base for the company's next-generation rack-scale AI system.

Launching around half a dozen major product designs, including several specialized CPUs and two rack-scale solutions, is a big deal. So let's go over AMD's data center roadmap for 2026 and 2027.

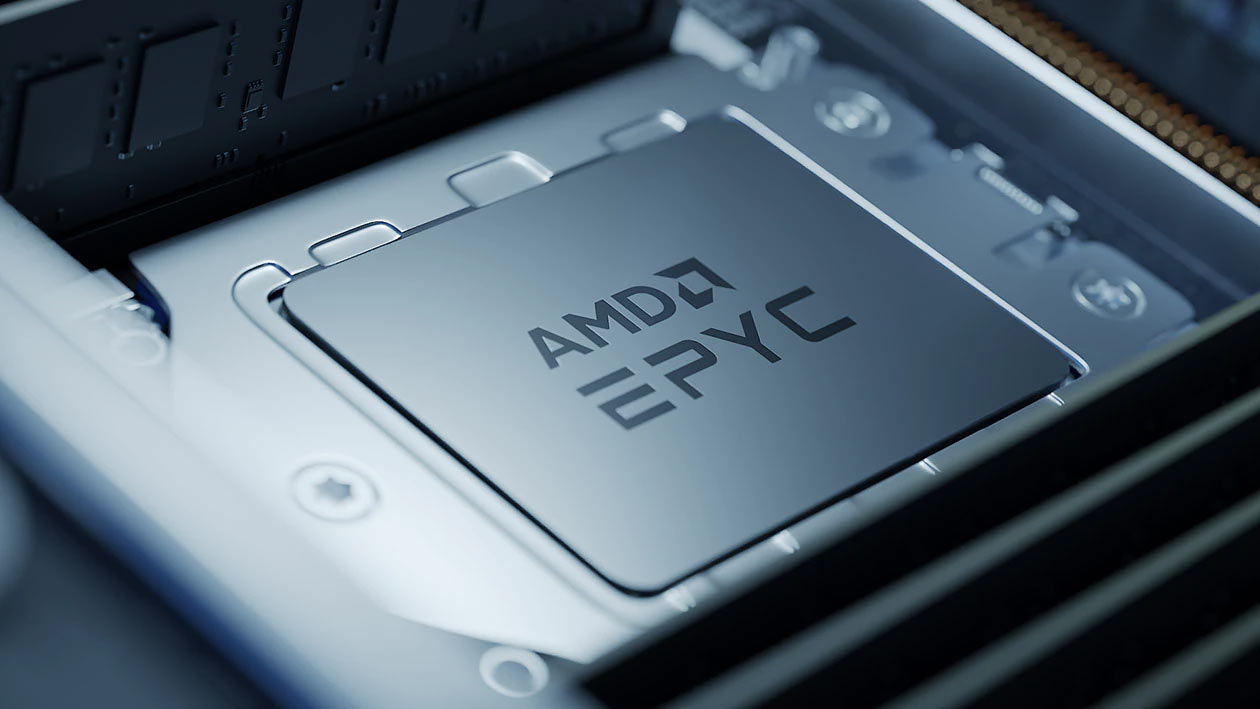

2026 CPUs: Venice and Venice-X — featuring up to 256 Zen 6 cores

AMD's upcoming sixth-gen EPYC Venice processor will rely on the company's next-generation Zen 6 microarchitecture and will also be the firm's first data center design to be made on TSMC's N2 (2nm-class) process technology. The chip will scale up to 256 high-performance Zen 6 cores, a 33% increase over the current EPYC Turin processors, which feature up to 192 compact Zen 5c cores.

AMD's next-gen EPYC CPUs are expected to adopt the all-new SP7 form factor, which can accommodate more compute complex dies (CCDs) on the package, increase the number of memory channels, enhance I/O capabilities, and boost peak power delivery to feed extra cores.

In addition to the increased core count, another key improvement will be a major boost in memory bandwidth; EPYC Venice will provide up to 1.6 TB/s of per-socket memory throughput, more than doubling the 614 GB/s available on current EPYC CPUs. While AMD has not detailed the mechanism behind this increase, it is likely to involve support for advanced memory modules such as MR-DIMM or MCR-DIMM, which are becoming the default choice for CPUs with large core counts that are starved for sufficient memory bandwidth.
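To put the bandwidth claim next to the core-count increase, here is a rough back-of-the-envelope sketch. It uses only the per-socket figures quoted above. Pairing the 614 GB/s figure with the 192-core part and splitting bandwidth evenly across cores are our own simplifying assumptions, not something AMD has stated.

```python
# Per-socket figures as quoted in the article (GB/s and core counts).
VENICE_BW_GBS = 1600.0   # "up to 1.6 TB/s" per socket
CURRENT_BW_GBS = 614.0   # current EPYC per-socket figure
VENICE_CORES = 256       # top Venice configuration
TURIN_CORES = 192        # top Turin (Zen 5c) configuration; pairing is an assumption


def per_core_bandwidth(socket_bw_gbs: float, cores: int) -> float:
    """Naive even split of socket bandwidth across cores (illustrative only)."""
    return socket_bw_gbs / cores


bw_ratio = VENICE_BW_GBS / CURRENT_BW_GBS
core_ratio = VENICE_CORES / TURIN_CORES
venice_per_core = per_core_bandwidth(VENICE_BW_GBS, VENICE_CORES)
turin_per_core = per_core_bandwidth(CURRENT_BW_GBS, TURIN_CORES)

print(f"Socket bandwidth ratio: {bw_ratio:.2f}x")
print(f"Core count ratio:       {core_ratio:.2f}x")
print(f"Per-core bandwidth:     {turin_per_core:.2f} -> {venice_per_core:.2f} GB/s")
```

Under these assumptions, socket bandwidth grows by about 2.6x while the core count grows by about 1.33x, so the bandwidth available to each core comes close to doubling.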

The processor will also significantly enhance connectivity with accelerators, since AMD plans to double CPU-to-GPU bandwidth, likely by adopting PCIe 6.0, which can deliver roughly 128 GB/s in each direction over an x16 link before encoding overhead. With as many as 128 PCIe lanes, Venice should be capable of moving substantially larger volumes of data between CPUs and accelerators such as AMD's upcoming Instinct MI400-series GPUs, which will be particularly handy for AMD's rack-scale AI solutions.
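For reference, the raw PCIe arithmetic can be sketched as follows. The 32 GT/s and 64 GT/s per-lane signaling rates are the published PCIe 5.0 and 6.0 figures. That Venice uses PCIe 6.0 on all 128 lanes is the article's speculation rather than a confirmed spec, and the helper name is ours.

```python
PCIE5_GT_PER_LANE = 32  # PCIe 5.0 raw signaling rate per lane, GT/s
PCIE6_GT_PER_LANE = 64  # PCIe 6.0 raw signaling rate per lane, GT/s


def raw_gb_per_s(gt_per_lane: int, lanes: int) -> float:
    """Raw one-direction bandwidth in GB/s, before encoding/FLIT overhead.

    Each transfer carries one bit per lane, so divide by 8 to get bytes.
    """
    return gt_per_lane * lanes / 8


x16_gen5 = raw_gb_per_s(PCIE5_GT_PER_LANE, 16)
x16_gen6 = raw_gb_per_s(PCIE6_GT_PER_LANE, 16)
all_lanes_gen6 = raw_gb_per_s(PCIE6_GT_PER_LANE, 128)  # assumes 128 Gen6 lanes

print(f"PCIe 5.0 x16: {x16_gen5:.0f} GB/s per direction")
print(f"PCIe 6.0 x16: {x16_gen6:.0f} GB/s per direction")
print(f"128 PCIe 6.0 lanes: {all_lanes_gen6:.0f} GB/s per direction")
```

The generation-over-generation factor of two per x16 link lines up with AMD's stated goal of doubling CPU-to-GPU bandwidth, although usable throughput will be lower than these raw numbers.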

AMD claims the EPYC Venice processor will deliver up to 70% higher performance compared with the existing EPYC 9005-series, although the company did not specify the workloads used for this comparison, so take the claim with a pinch of salt.

Although AMD has so far not released fifth-gen EPYC Turin processors with 3D V-Cache aimed at AI and HPC workloads that demand massive single-thread performance, the company clearly teased EPYC Venice-X with extra L3 cache as being a part of its sovereign AI and HPC platforms featuring the Instinct MI430X and MI440 accelerators.

Whether AMD plans to offer specialized versions of its EPYC Venice processors based on the Zen 6 microarchitecture for space and power-constrained deployments, such as edge and network solutions, remains to be seen. It's certainly a possibility given that there are no Zen 5-based offerings for these segments.

2027 CPUs: Verano — A brand-new design, or Zen 6 with BSPDN?

While we can only wonder about AMD's plans for specialized versions of sixth-gen EPYC processors, we do know that AMD intends to release its EPYC Verano high-performance processors in 2027 to power its next-generation rack-scale AI system.

AMD has not officially called Verano its seventh-gen EPYC offering, so we do not know whether these CPUs are based on the Zen 7 microarchitecture or on Zen 6 with tangible enhancements (possibly a Zen 6+ version). What those enhancements might be is anyone's guess, as AMD has not disclosed any official details.

The planned introduction of Verano processors in 2027 coincides with the ramp of TSMC's A16 fabrication process, which is expected to enter high-volume production in late 2026. A16 will be the foundry's first manufacturing node to incorporate backside power delivery, a feature designed to improve power distribution and efficiency, which will be particularly beneficial for large datacenter CPUs and AI accelerators.

AMD has not confirmed which process technology will underpin its 2027 server processors and GPUs. But given the timing of the roadmap and the advantages of backside power delivery for high-current compute silicon, it would not be surprising if AMD's 2027 data center products are built on A16. In the end, by re-architecting the power delivery of Zen 6 CPUs for servers, AMD would get a 'free' performance upgrade and gain valuable experience building processors with a backside power delivery network (BSPDN) — all without moving to an all-new microarchitecture, which would greatly simplify debugging.

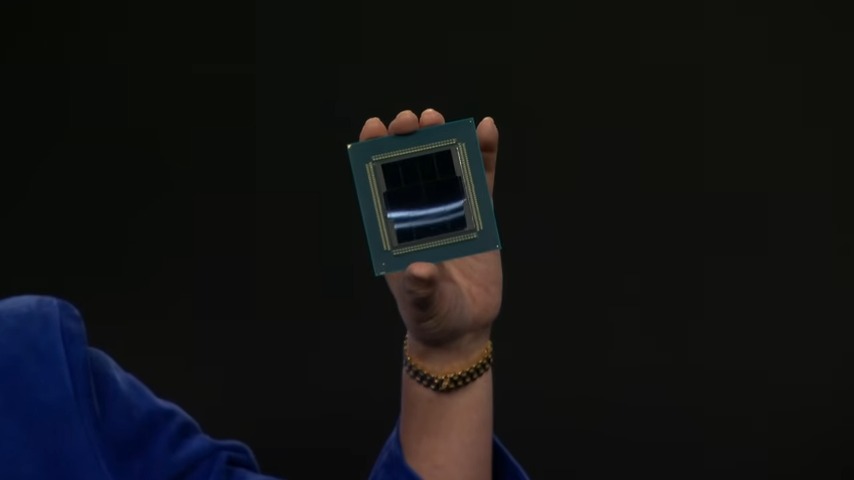

2026 AI Accelerators: Instinct MI400 series — different subsets of CDNA 5

Starting with the Instinct MI400-series data center GPUs, AMD will offer three distinct products based on different subsets of the CDNA 5 architecture, each aimed at different types of workloads and deployments. By tailoring each accelerator to a specific workload, AMD can simplify execution units in AI GPUs, improve power efficiency, maximize performance, and even lower manufacturing costs, albeit at the price of an additional tapeout on TSMC's 2nm-class process, which is costly.

The Instinct MI440X and MI455X models are designed primarily for low-precision AI computation, including data formats such as FP4, FP8, and BF16. AMD plans to use the MI440X as the foundation of its Enterprise AI platform, which uses AI servers based on one EPYC Venice CPU (using the Zen 6 architecture) and eight Instinct MI440X accelerators. These machines are designed for on-premises AI deployments for training and inference, and feature power consumption and cooling requirements that are compatible with existing data center infrastructure.

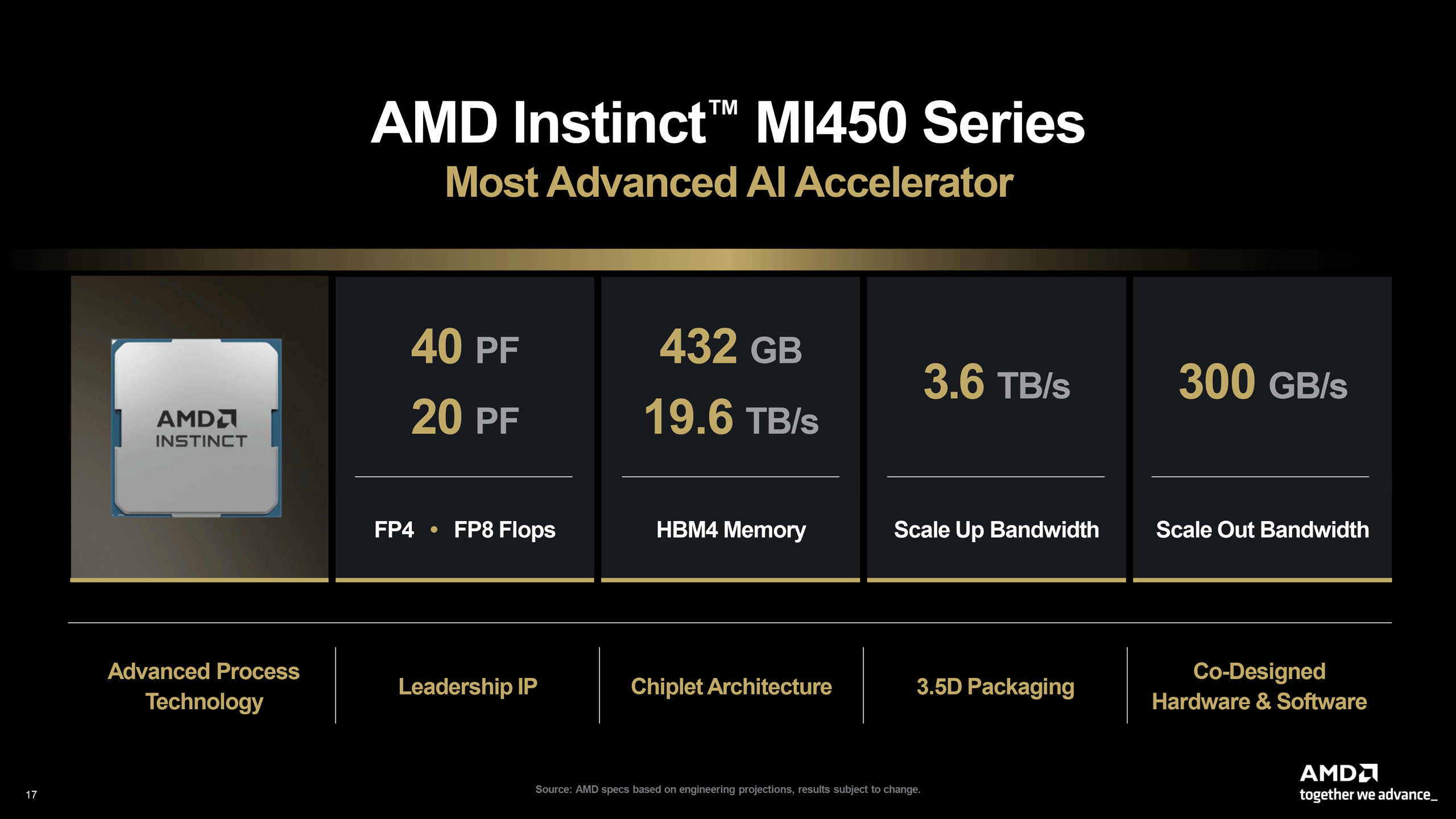

The Instinct MI455X will be AMD's flagship AI offering, meant to deliver the absolute maximum performance when installed into AMD's liquid-cooled Helios rack-scale systems. AMD once projected its Instinct MI455X to offer 2X performance (without disclosing the data format), increase memory capacity by 50%, and grow bandwidth by more than 100% compared to the Instinct MI355X. Meanwhile, the MI455X is set to hit 40 dense FP4 PFLOPS, 20% below the 50 dense FP4 PFLOPS expected from Nvidia's Rubin GPU.

By contrast, the Instinct MI430X targets sovereign AI and HPC environments and therefore supports both FP32 and FP64 precision, required for traditional technical computing and supercomputing workloads. AMD envisions that customers deploying MI430X accelerators will also use EPYC Venice-X processors that add extra cache and offer enhanced single-thread performance.

AMD plans to use Infinity Fabric for intra-package connectivity on its MI430X, MI440X, and MI455X accelerators, which is not surprising. For scale-up connectivity, AMD expects to deploy UALink, the industry's first standard interconnect for AI accelerators. Broad deployment of UALink, however, will depend on ecosystem support from companies such as Astera Labs, Auradine, Enfabrica, and Xconn. According to recent rumors, AMD is also looking at UALink-over-Ethernet connectivity.

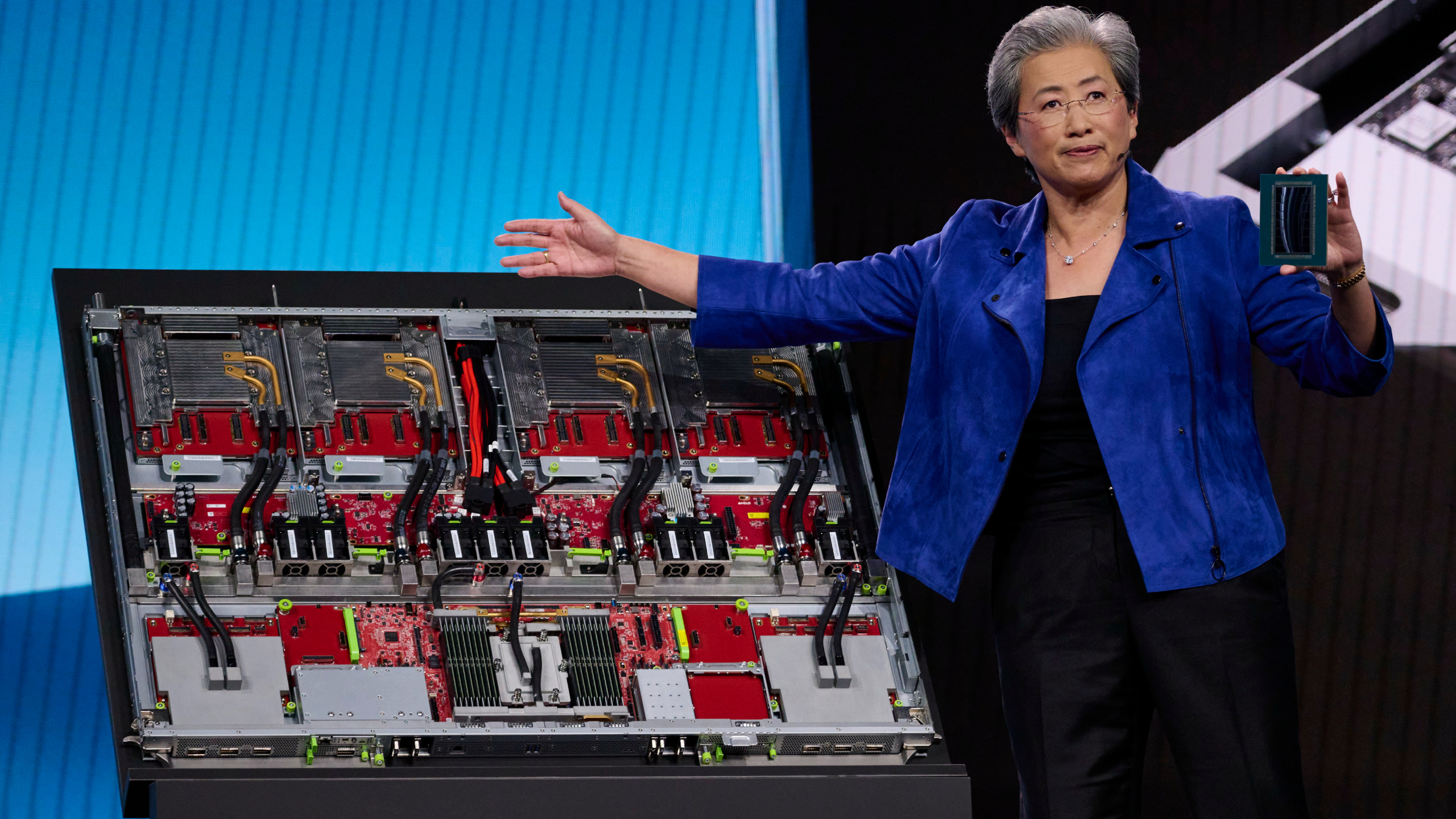

2026 / 2027 rack scale platform: Helios — AMD's first rack-scale AI system

AMD's Instinct MI455X and UALink are two key ingredients of Helios, the company's first rack-scale AI system. This machine will use EPYC Venice CPUs, pack 72 Instinct MI455X accelerators (interconnected using UALink or UALink-over-Ethernet), offer 31 TB of HBM4 memory with 1400 TB/s of bandwidth, and drive 2900 FP4 dense PFLOPS. The unit will be behind Nvidia's VR200 NVL72 system in terms of compute performance, but as it will feature more HBM4 memory capacity, it may have an edge over its rival in memory-dependent workloads. In addition, Helios will use Pensando Vulcano network interface cards (NICs), which are expected to be one of the industry's first 800 GbE network cards that comply with the Ultra Ethernet specification.
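As a sanity check, the rack-level Helios figures above can be divided back down to a per-accelerator view. This is a rough sketch that assumes resources are spread evenly across the 72 GPUs and uses decimal units (1 TB = 1000 GB); the per-GPU results are derived by us, not quoted by AMD.

```python
GPUS_PER_RACK = 72
RACK_HBM_TB = 31.0           # total HBM4 capacity quoted for Helios
RACK_BW_TBS = 1400.0         # total HBM4 bandwidth quoted for Helios
RACK_FP4_PFLOPS = 2900.0     # total dense FP4 compute quoted for Helios
MI455X_FP4_PFLOPS = 40.0     # per-GPU dense FP4 figure quoted for MI455X

# Derived per-GPU values, assuming an even split across all accelerators.
per_gpu_hbm_gb = RACK_HBM_TB * 1000 / GPUS_PER_RACK
per_gpu_bw_tbs = RACK_BW_TBS / GPUS_PER_RACK
per_gpu_pflops = RACK_FP4_PFLOPS / GPUS_PER_RACK

# Cross-check: rebuild the rack total from the per-GPU compute figure.
rack_from_per_gpu = GPUS_PER_RACK * MI455X_FP4_PFLOPS

print(f"HBM4 per GPU:      {per_gpu_hbm_gb:.0f} GB")
print(f"Bandwidth per GPU: {per_gpu_bw_tbs:.1f} TB/s")
print(f"FP4 per GPU:       {per_gpu_pflops:.1f} PFLOPS")
print(f"72 x 40 PFLOPS:    {rack_from_per_gpu:.0f} PFLOPS")
```

The 72 x 40 PFLOPS product is 2880 PFLOPS, which rounds to the 2900 PFLOPS rack figure, so the per-GPU and per-rack compute numbers are consistent with each other.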

Recent rumors indicated that AMD may delay widespread availability of its Instinct MI455X accelerators and Helios rack-scale systems from the second half of 2026 to Q2 2027 — reports AMD was quick to deny. But uncertainties surrounding the broad availability of UALink switches in calendar 2026 clearly impact the sentiment about AMD's Helios and rack-scale solutions relying on the industry-standard interconnection in general. Then again, considering that AMD has signed multi-billion-dollar deals with AI companies to supply them with its future AI systems, it is possible that the first batches of rack-scale MI455X UALoE72 systems will be exclusively available to companies like Meta and OpenAI, whereas other clients will get them sometime in 2027.

2027 AI Accelerators: Instinct MI500 series — CDNA 6 and 256-way MegaPod

Although all eyes are on AMD's Instinct MI400-series and Helios right now, the company has an even more impressive AI solution in the pipeline: Instinct MI500-series accelerators based on the CDNA 6 architecture. The next-generation AI rack is rumored to pack 64 EPYC Verano CPUs and 256 Instinct MI500-series GPU packages.

The system architecture — tentatively called Instinct MI500 UAL256 — spans three interconnected racks. The two outer racks each contain 32 compute trays that integrate one EPYC Verano processor and four Instinct MI500 accelerators. The central rack hosts 18 trays dedicated to UALink switches that link the cluster together. In total, the deployment includes 64 compute trays and 256 GPU modules. Given the power density of modern AI accelerators, the MI500 MegaPod will rely on liquid cooling for both compute trays and networking hardware.

Compared to Nvidia's Kyber VR300 NVL576 pod, which integrates 144 quad-chiplet GPUs, AMD's MI500 UAL256 configuration provides about 78% more GPU packages per system. However, it remains unclear whether the platform can match the expected performance of NVL576, which is projected to deliver 147 TB of HBM4 memory and roughly 14,400 FP4 PFLOPS of compute.
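The tray counts and the package comparison above can be tallied with a short sketch. The layout is the rumored one described in this section, and the class and variable names are purely illustrative.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ComputeRack:
    """One outer rack of the rumored MI500 UAL256 layout."""
    trays: int
    cpus_per_tray: int
    gpus_per_tray: int

    @property
    def cpus(self) -> int:
        return self.trays * self.cpus_per_tray

    @property
    def gpus(self) -> int:
        return self.trays * self.gpus_per_tray


COMPUTE_RACKS = 2            # two outer racks; the central rack holds switches
SWITCH_TRAYS = 18            # UALink switch trays in the central rack
NVL576_GPU_PACKAGES = 144    # Nvidia Kyber VR300 NVL576, as quoted above

rack = ComputeRack(trays=32, cpus_per_tray=1, gpus_per_tray=4)
total_trays = COMPUTE_RACKS * rack.trays
total_cpus = COMPUTE_RACKS * rack.cpus
total_gpus = COMPUTE_RACKS * rack.gpus
extra_packages_pct = (total_gpus / NVL576_GPU_PACKAGES - 1) * 100

print(f"Compute trays: {total_trays}, CPUs: {total_cpus}, GPUs: {total_gpus}")
print(f"GPU packages vs. NVL576: +{extra_packages_pct:.0f}%")
```

Note that this compares package counts only. It says nothing about per-package performance, which is why the open question about matching NVL576 remains.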

AMD is expected to introduce the system in late 2027, roughly when Nvidia’s VR300 NVL576 Kyber platforms are anticipated to arrive. If this timeline holds, both companies will likely ramp production of their respective MI500-series and Rubin Ultra-based rack-scale systems in 2028.

With an increasing share of AMD's revenue coming from the data center, the company must keep executing beyond 2027 if it is to compete with future designs such as Nvidia's Feynman architecture. For now, the 2026 and 2027 roadmap appears to give AMD a solid foundation for keeping its products competitive as the AI race continues.