Nvidia GTC 2026 keynote live blog — Vera Rubin GPUs and CPUs, DLSS 5, and the 'future of technology'

A lot of AI announcements, and maybe a thing or two for consumers.

Nvidia CEO Jensen Huang is on stage at the SAP Center in San Jose, California, to deliver the GTC 2026 keynote. 3DTested Editor-in-Chief Paul Alcorn and Senior GPU Analyst Jeffrey Kampman are on the ground in San Jose, and we'll be bringing you updates from the keynote floor in real time. The keynote begins at 11am PT, and it's expected to run two hours.

As with any Nvidia event these days, we expect to hear a lot about AI advancements, as Nvidia continues to run circles around the tech industry in the enterprise space. We could see some announcements on the consumer front as well, but if you're expecting to see new GeForce graphics cards, you might leave disappointed.

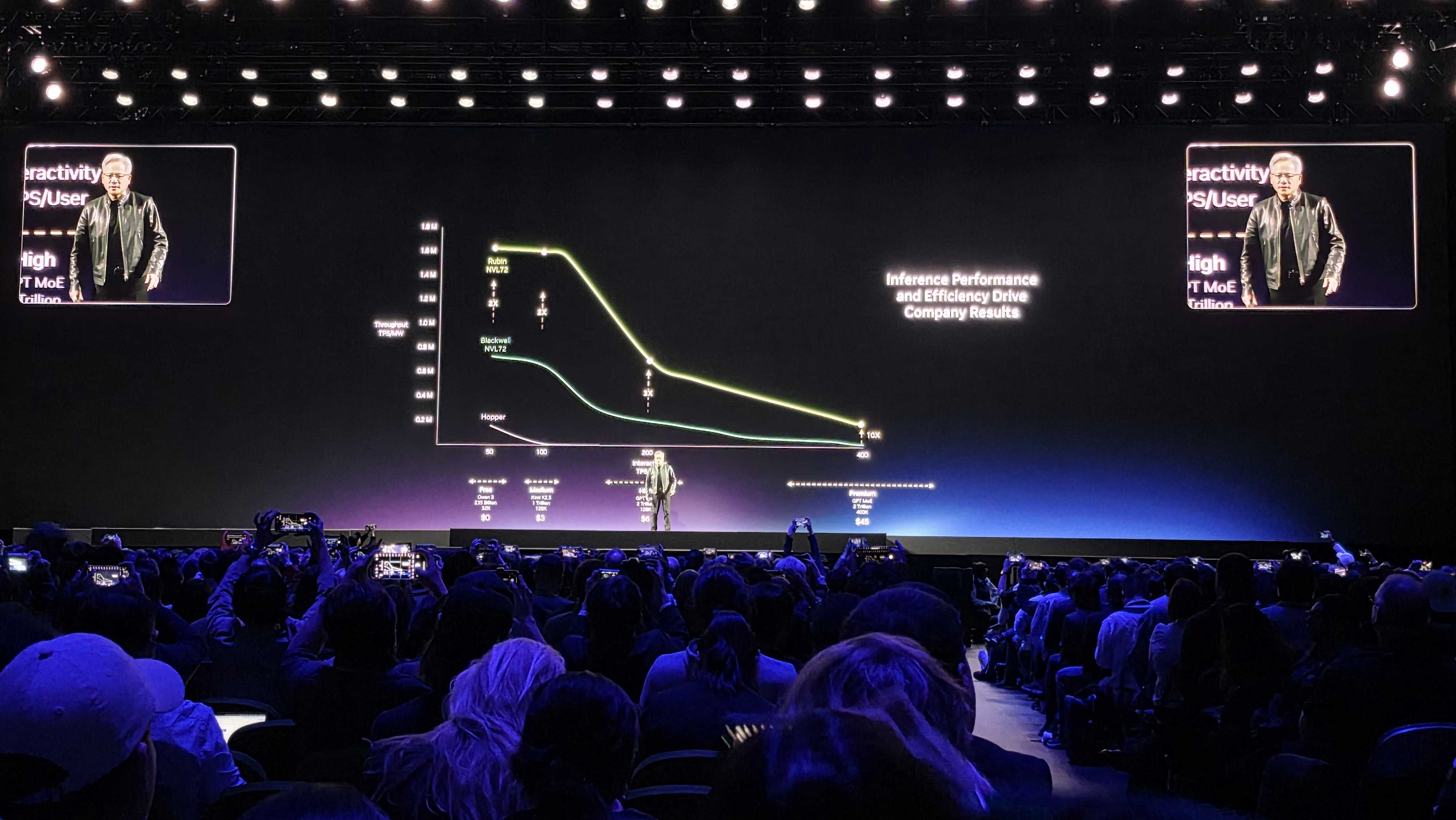

This is 'the most important chart' for companies, says Nvidia

Tokens are "the new commodity," according to Nvidia. For businesses, Nvidia says that the throughput of an AI factory at iso power is something that will be "studied for years." More tokens mean smarter models, and the smarter the models get, the more token throughput you need. Nvidia says that, at every tier, Vera Rubin delivers much higher throughput.

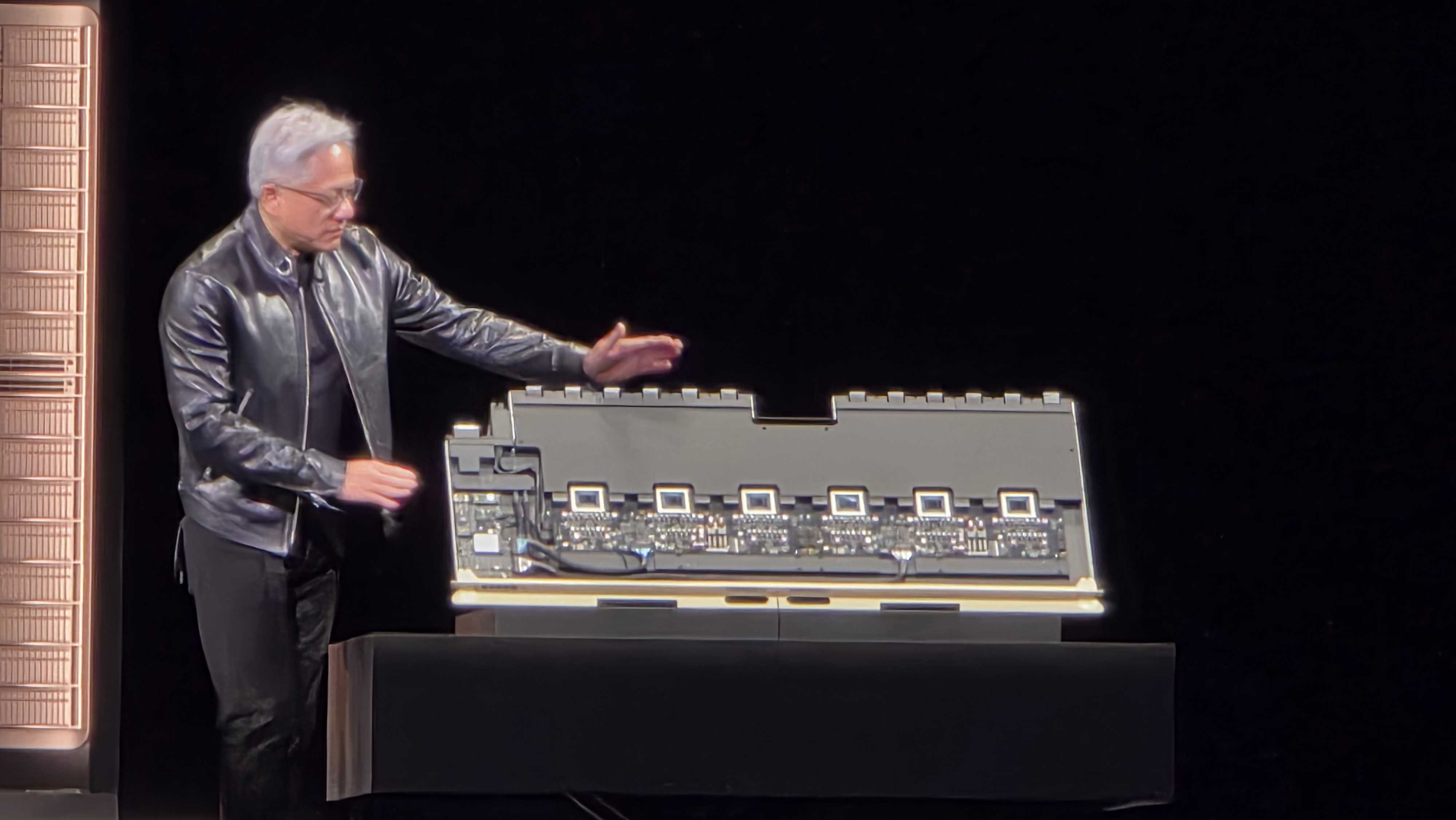

Jensen shows off NVLink for Rubin Ultra

Jensen explains how NVLink for Rubin Ultra works, with compute sitting in the front and the scale-up fabric in the back.

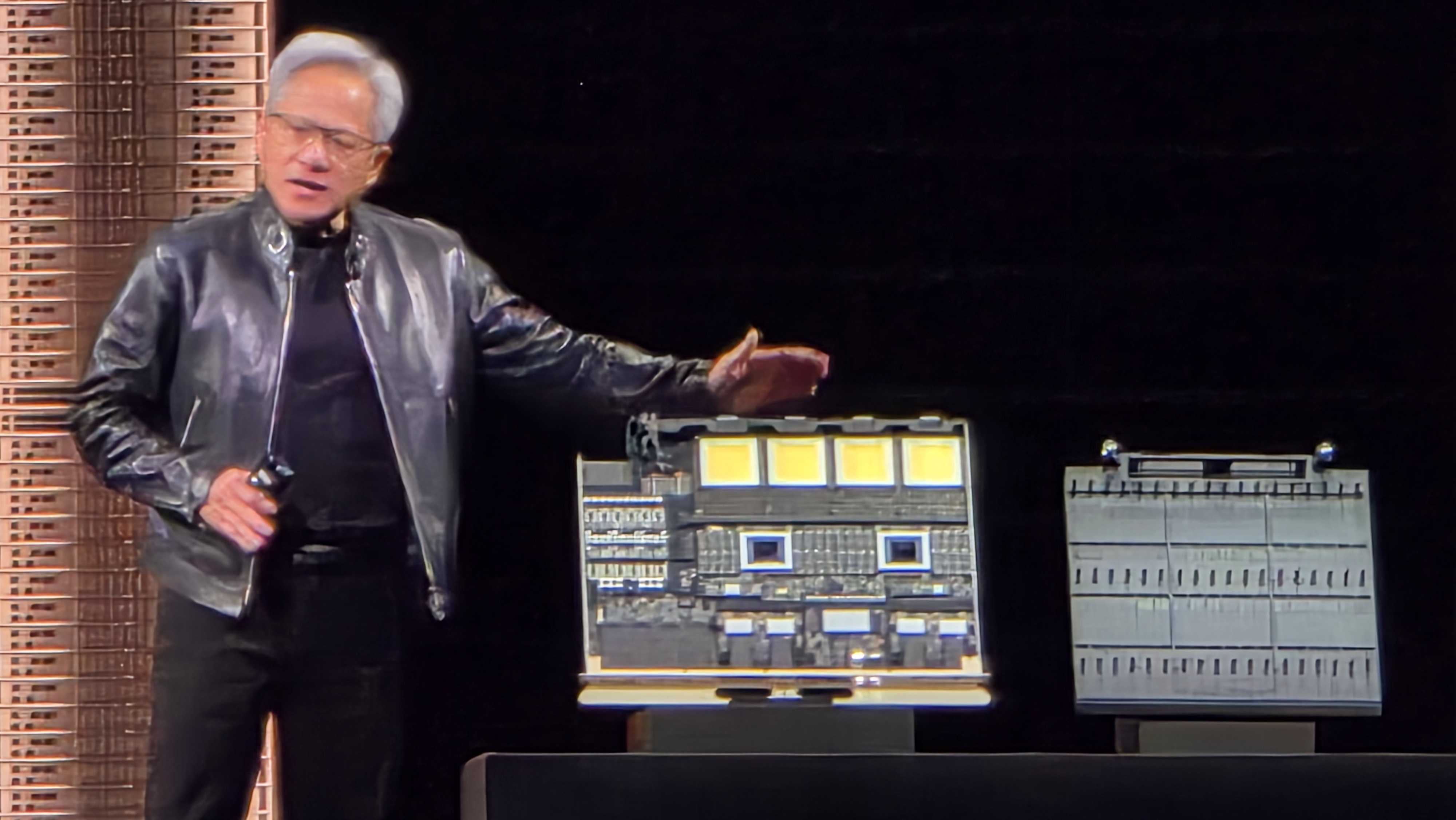

Groq 3 LPU and Groq LPX join the fray

A new addition to the system is a Groq LPX rack, which we learned about ahead of GTC. You can read Jeffrey Kampman's breakdown of Groq 3 in Vera Rubin now.

Learn more about Nvidia's Vera CPU

The 88-core Vera CPU fits into a rack with 256 chips, each of them liquid-cooled. If you want to dig in deep on Nvidia's latest chip that aims to take on AMD and Intel, take a look at our Vera CPU deep dive.

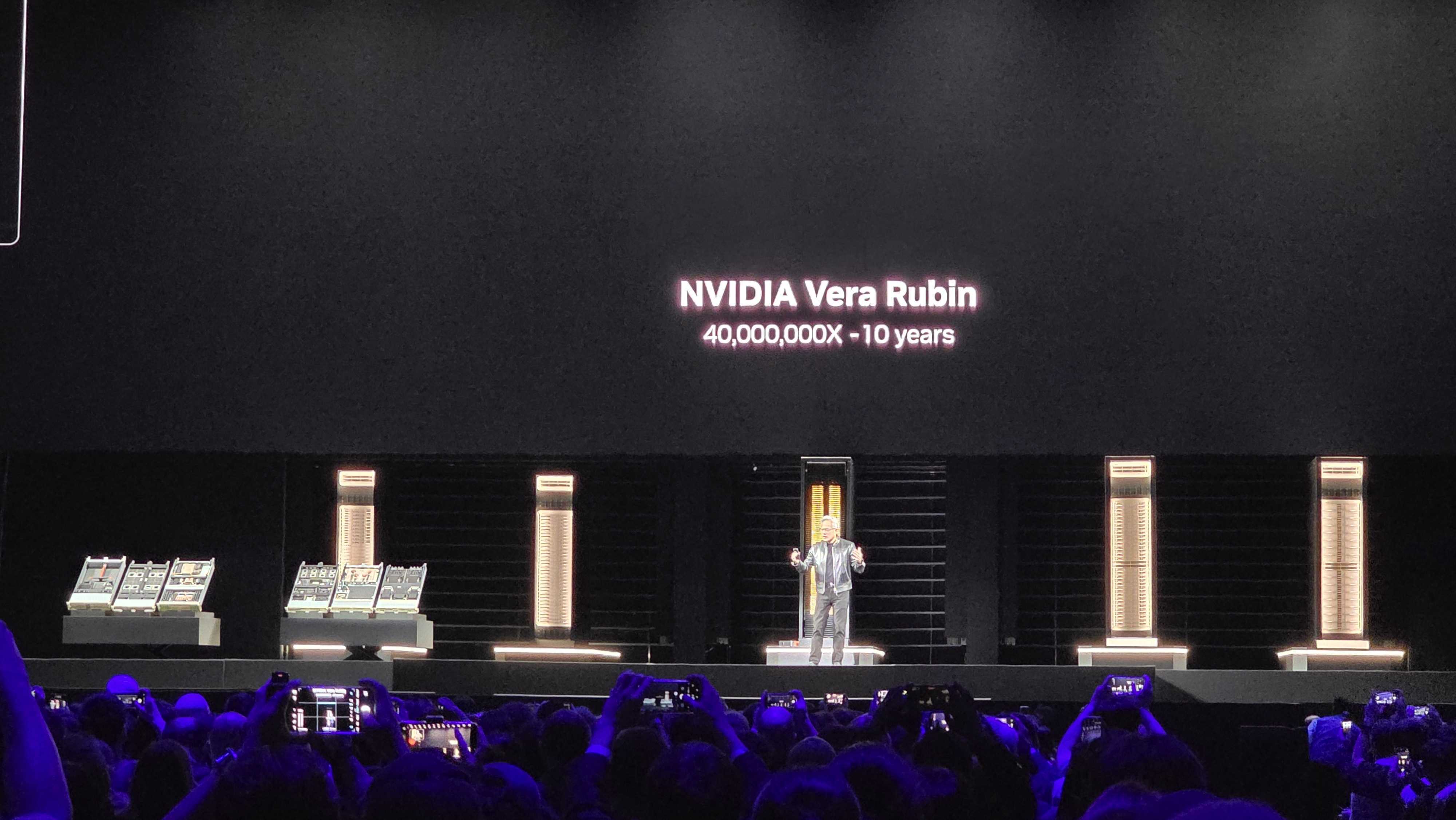

Vera Rubin joins Jensen on stage

Vera Rubin NVL72 is the "engine supercharging the era of agentic AI." A new addition is the Groq 3 LPX tray, and as a whole, Nvidia says it's delivered 40 million times more compute over the past decade. Jensen is showing off Vera Rubin on stage; the whole thing. It's "one giant system."

Jensen says the Vera CPU is designed for high single-threaded performance, and the company built it to go along with its racks for agentic processing.

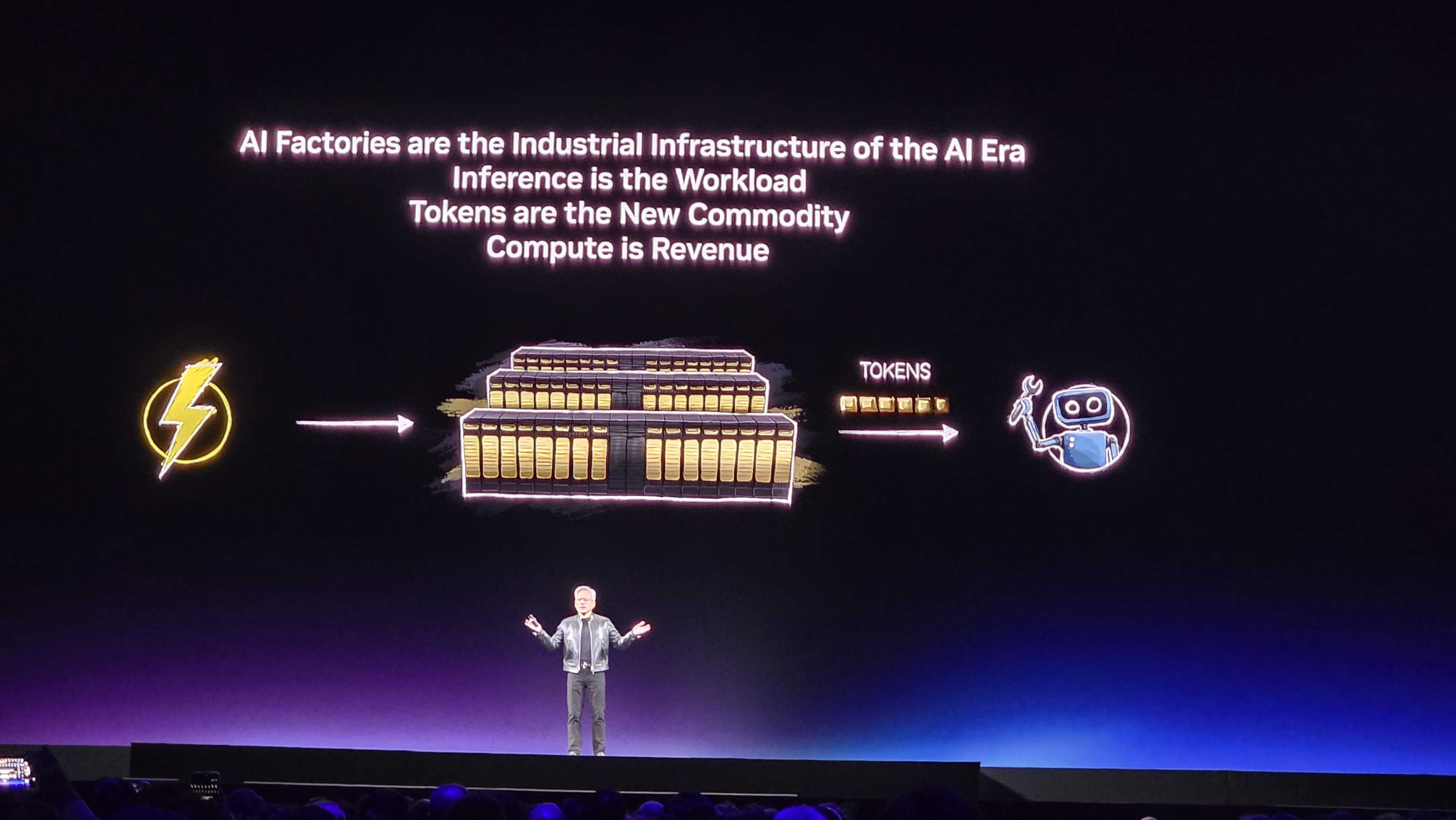

'It's now a factory to generate tokens'

Jensen says data centers used to be a place to store files, and they're now a factory to generate tokens. Inference is the workload and tokens are the new commodity, says Nvidia. Now, onto a short video showing how we got here.

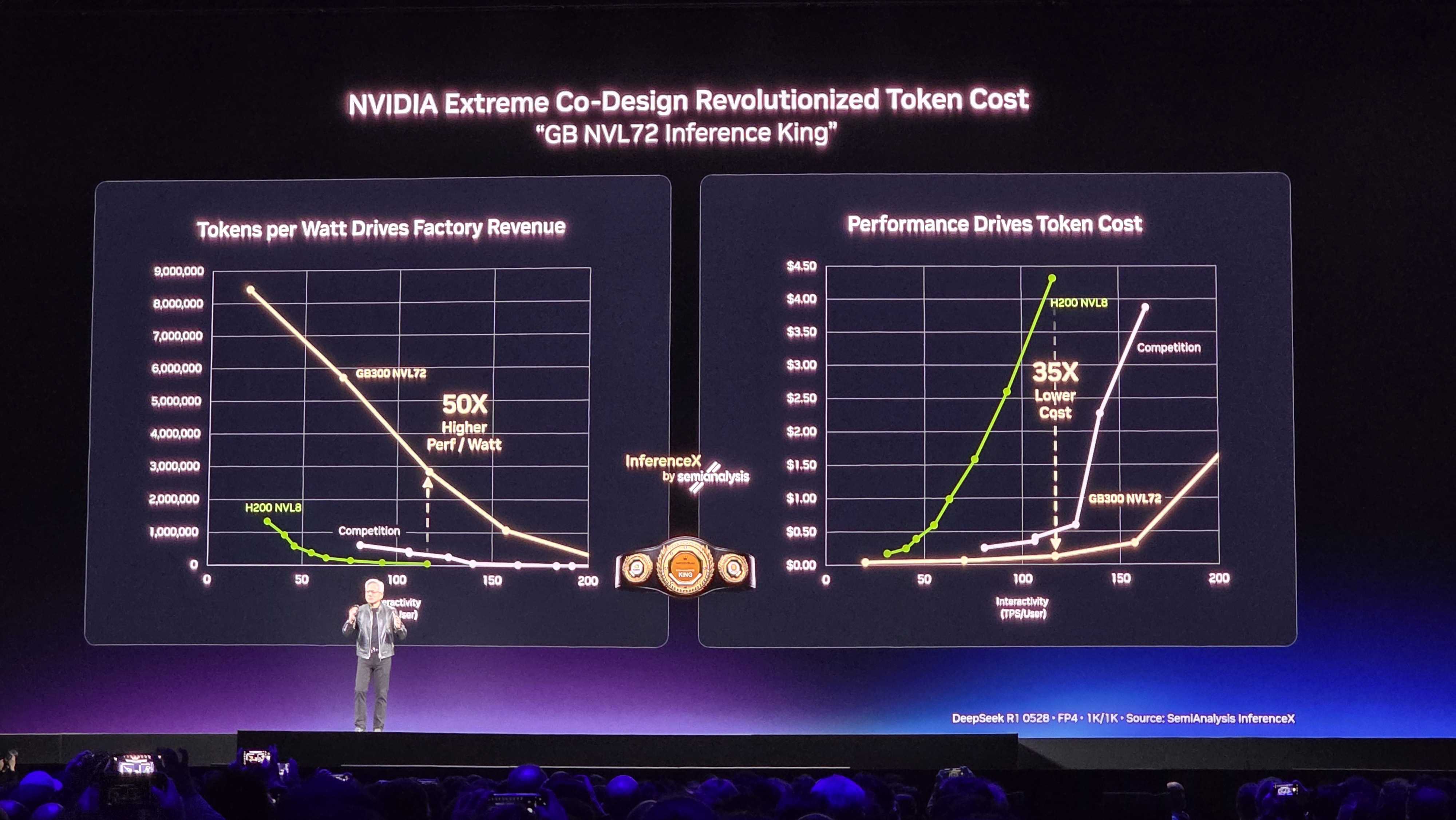

50x performance per watt, 35x lower cost

"Nobody believed me," says Jensen. When Nvidia said NVL72 delivered 30x better performance per watt, it was wrong. Apparently, it delivers 50x better performance per watt. Jensen once again goes back to Moore's Law, saying it would maybe deliver 1.5x the performance in a year.
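For a rough sense of scale, here's a back-of-the-envelope sketch of how long a steady 1.5x-per-year improvement would take to compound to the 30x and 50x figures. The assumption that the 1.5x gain compounds every year is our reading of Jensen's remark, not a number from Nvidia, and the function name is just for illustration.

```python
import math


def years_to_reach(target_gain: float, yearly_gain: float = 1.5) -> float:
    """Years of compounding `yearly_gain` needed to reach `target_gain`.

    Assumption (ours, not Nvidia's): the gain compounds at a constant
    rate every year, so target_gain = yearly_gain ** years.
    """
    return math.log(target_gain) / math.log(yearly_gain)


# The two performance-per-watt figures quoted on stage.
years_for_30x = years_to_reach(30.0)
years_for_50x = years_to_reach(50.0)

print(f"30x at 1.5x per year: {years_for_30x:.1f} years")
print(f"50x at 1.5x per year: {years_for_50x:.1f} years")
```

Under that assumption, 50x works out to a bit under a decade of 1.5x yearly gains, which is the contrast Jensen seems to be drawing.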

Nvidia says Grace Blackwell was 'a giant bet'

Nvidia NVL72 was a "giant bet," says Jensen, and he thanked Nvidia's partners for sticking with the company. "It wasn't easy for anybody... Inference is the ultimate hard." The bet paid off, according to Nvidia, which you can see in the slide above.

Nvidia is the only company that runs every domain of AI across every domain of AI models

Jensen says Nvidia is the only company that runs every domain of AI across every domain of AI models. Between Nvidia, Anthropic, and Meta SL, Jensen says that represents a third of the world's AI compute. Nvidia says it's proven that "you can build with complete confidence" as an AI infrastructure company.

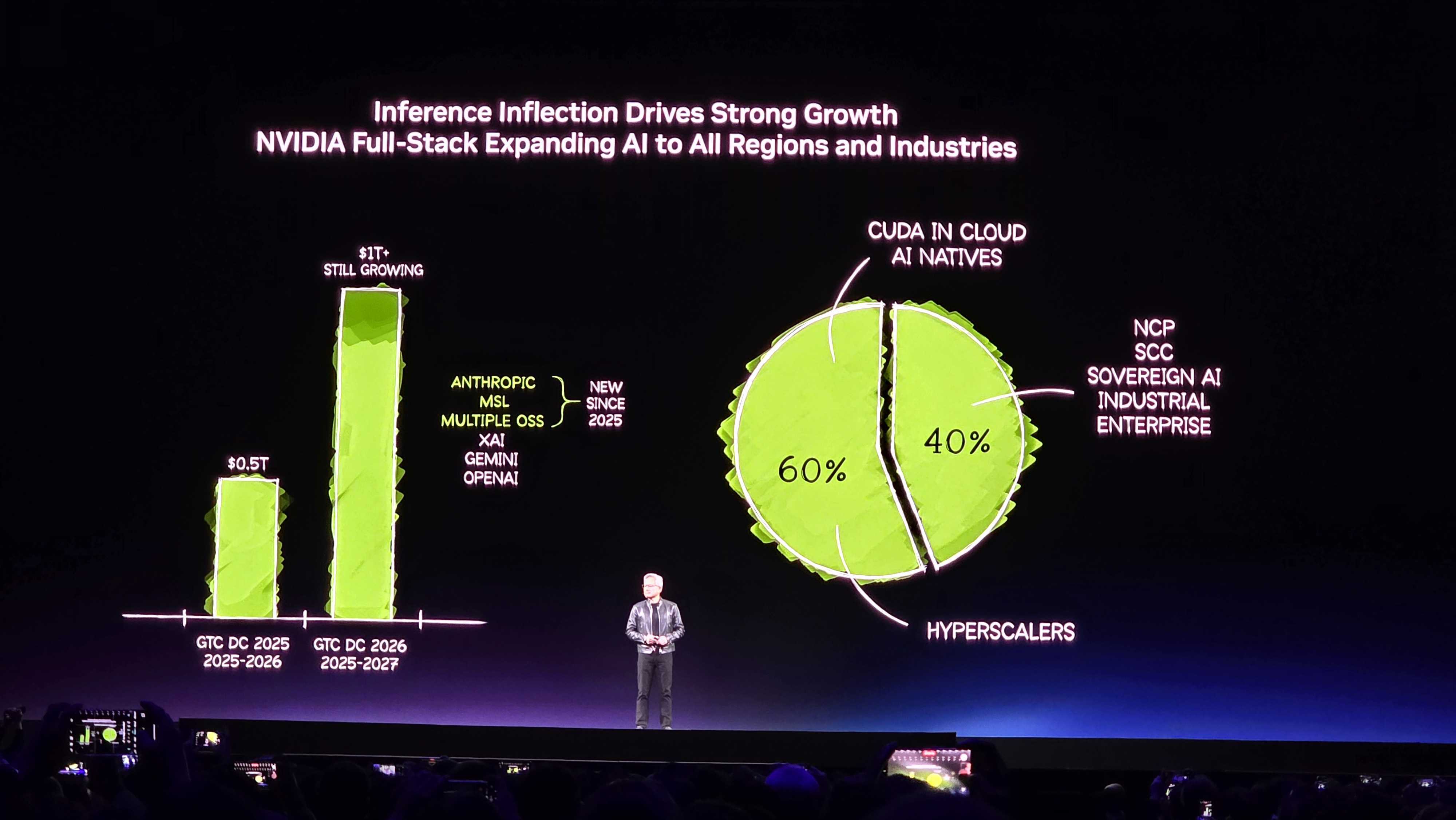

Nvidia says it's going to double demand through the next year

Last year, Nvidia said it saw about $500 billion of high-confidence demand and purchase orders for Blackwell and Rubin through 2026. "I see through 2027 at least $1 trillion," says Jensen. "Now, does it make any sense?" Jensen says that's what he's going to spend the rest of the keynote talking about.

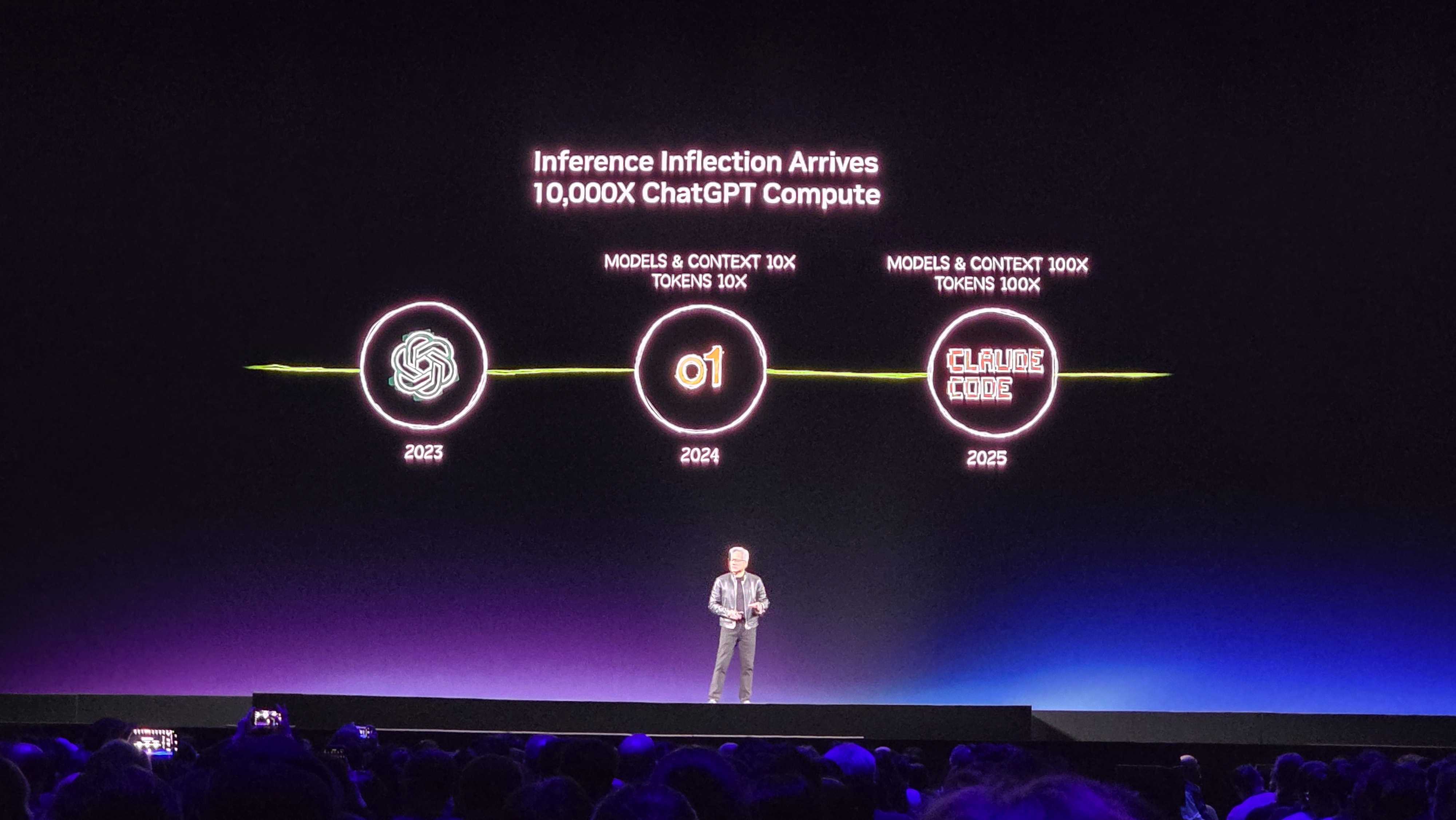

An accelerated timeline of AI

There's been rapid AI development over the past few years. In 2023, it was ChatGPT. In 2024, it was reasoning models like o1, and in 2025, it was huge models with massive context windows like Claude Code. It's the first "agentic model," says Jensen. The executive says 100% of Nvidia is using Claude Code, along with other models. In 2026, Nvidia says we've reached an "inflection point for inference."

Nvidia 'reinvented computing'

Jensen is talking about some of the many "AI native" companies, which are only possible because Nvidia "reinvented computing," says the executive. We're at the beginning of a new platform shift, he says, and it's akin to the PC revolution. This really began over the past two years, with ChatGPT kicking off the generative AI era.

cuDNN is what caused the 'big bang' of AI

Nvidia says cuDNN, or CUDA Deep Neural Network, is one of the most important libraries the company has ever made, saying it caused the "big bang" of modern AI. Nvidia is showing a short video about its various CUDA-X libraries, including a lifelike video that's entirely simulated.

Bringing it back to CUDA

"We are an algorithm company," says Jensen, after spending 10 minutes talking about the applications of Nvidia's software stack in just about every industry. Everything comes back to Nvidia's CUDA-X libraries, and Jensen describes them as the "crown jewel" of the company.

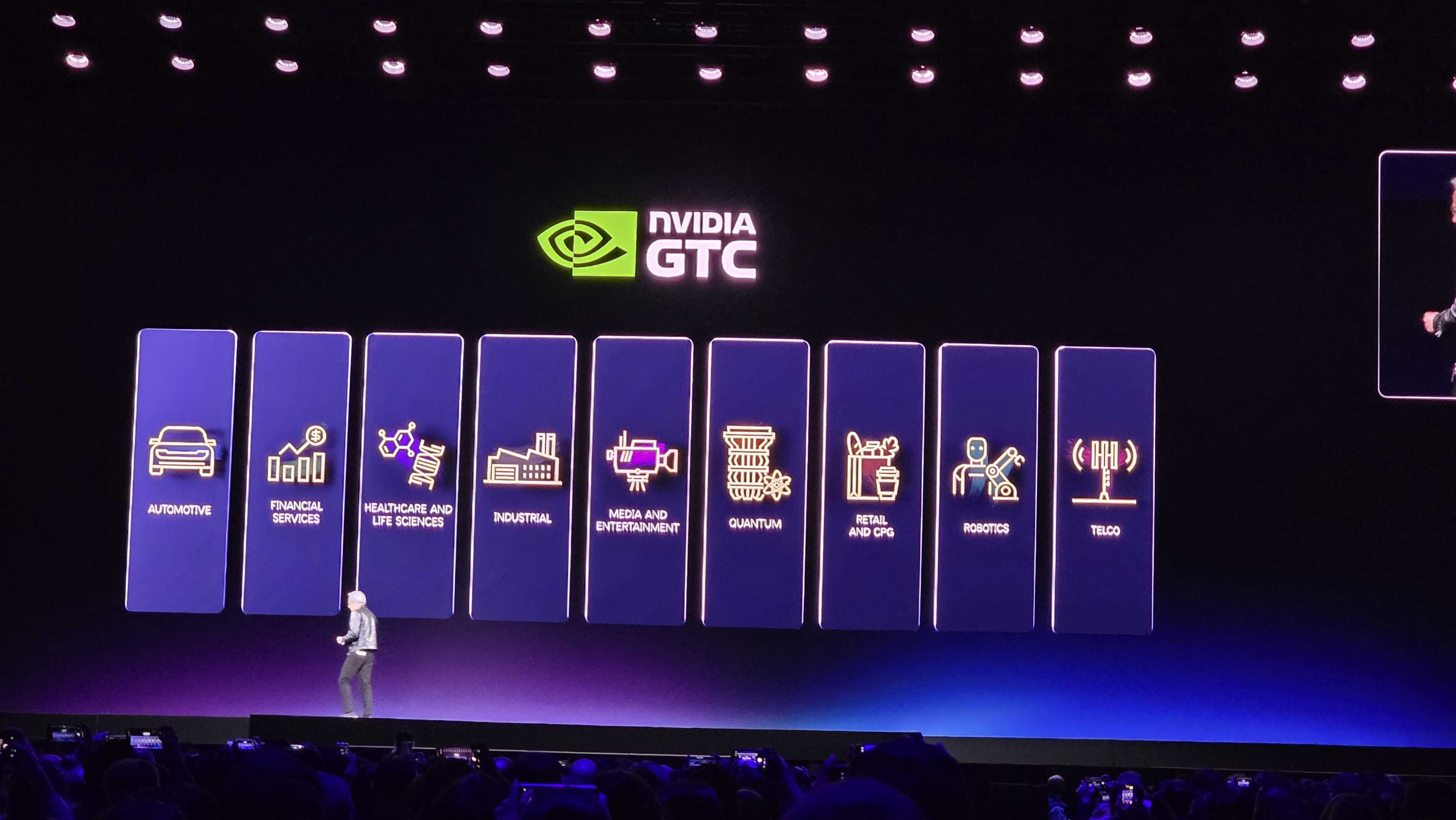

Nvidia says it needs domain-specific libraries to address the needs of different industries

AI has a lot of applications, but Jensen says it isn't as simple as throwing GenAI at the wall and hoping it sticks. "We have to have domain-specific libraries that solve problems in every one of these verticals," he says.

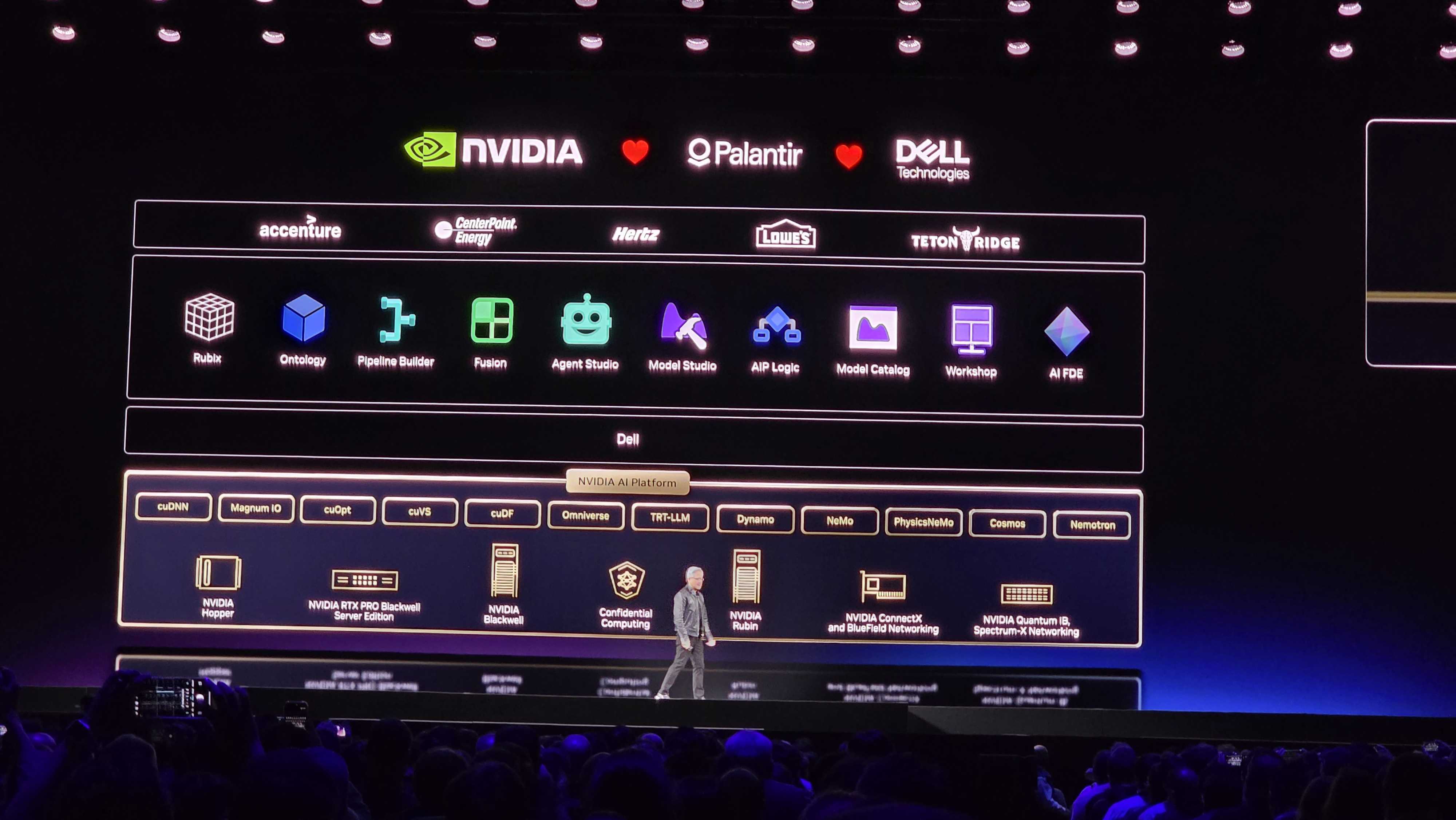

'Vertically integrated but horizontally open'

Jensen describes Nvidia as "vertically integrated but horizontally open," which may or may not raise some eyebrows at the FTC. Regardless, Nvidia says there's "no other way" it can be given what it's trying to do with accelerated computing, delivering the entire stack to customers.

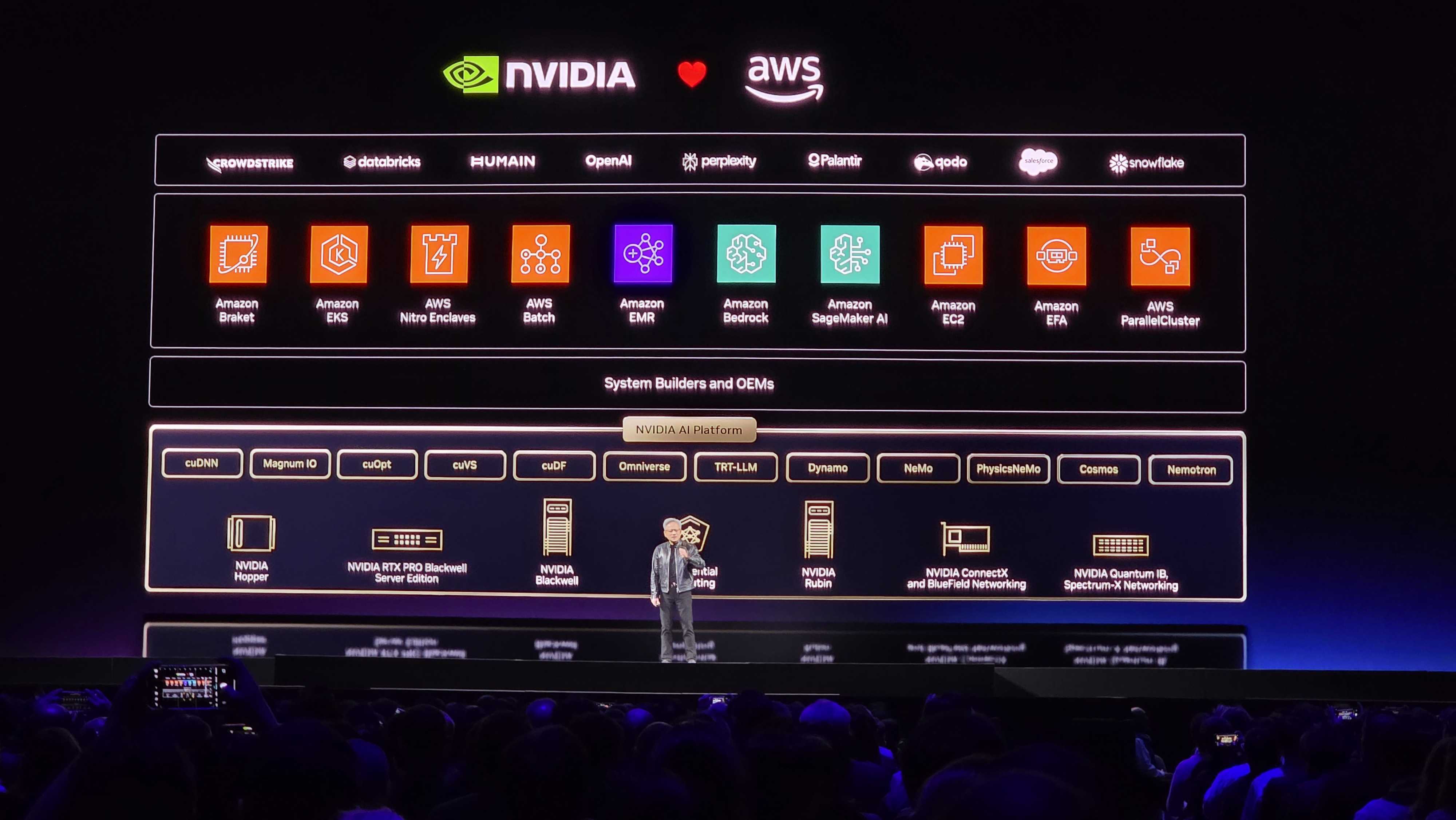

Nvidia is bringing OpenAI to AWS this year

"As you know, [OpenAI] is completely compute-constrained." Jensen says that OpenAI will come to AWS this year, hopefully lightening the load on its massive infrastructure demand.

More Moore's Law talk

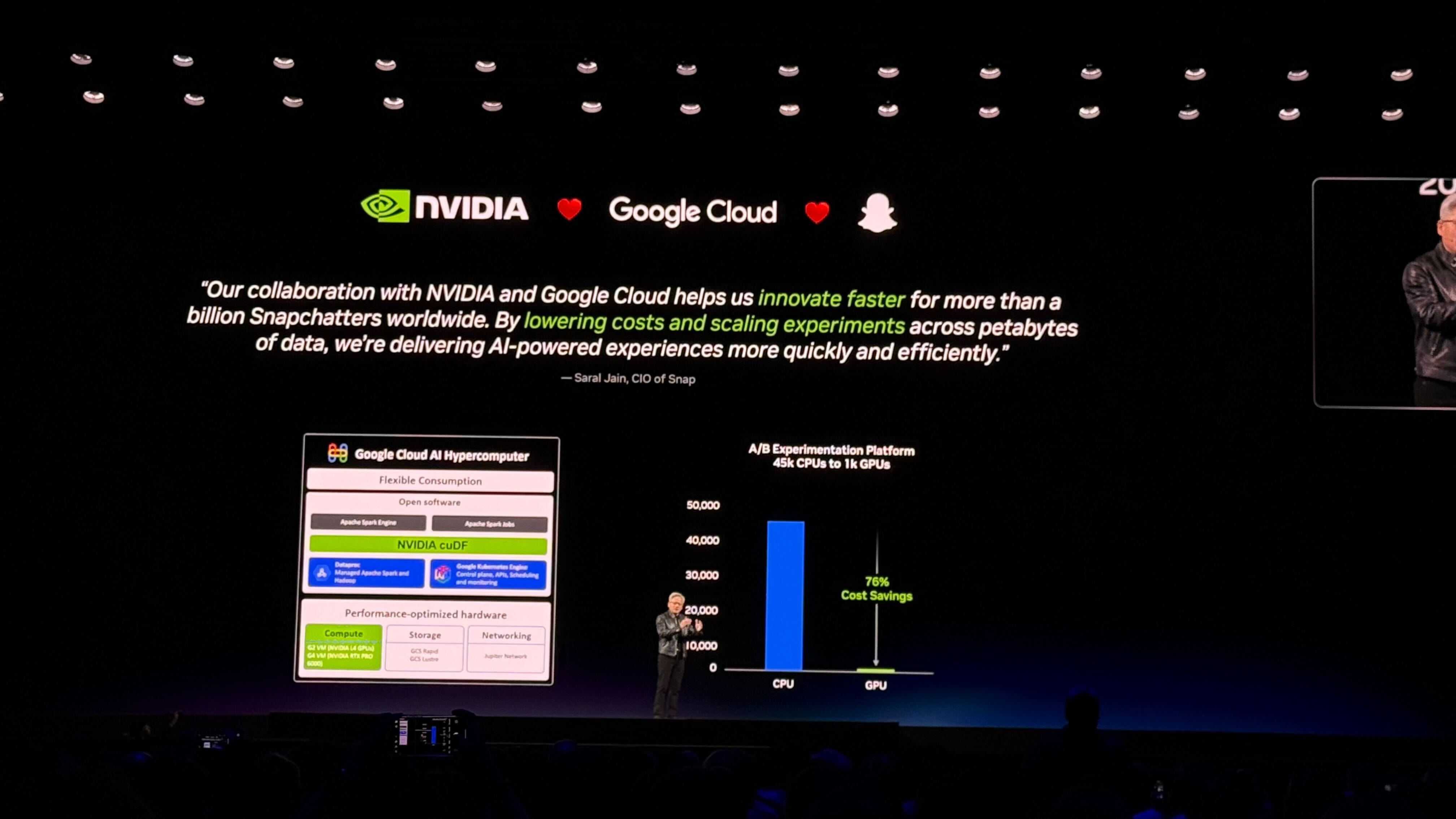

Jensen likes to talk about the death of Moore's Law, and he's doing so once again. "Moore's Law has run out of steam, accelerated computing allows us to take giant leaps forward." Jensen is showing off an example with Google Cloud and showing how Nvidia's acceleration can be repeated across companies and industries.

AI can solve unstructured data, says Jensen

Jensen is describing the importance of AI in unstructured data. He says this data makes up 90% of the world's data, but it's been "useless" because you can't search or query it. IBM, the inventor of SQL, is accelerating watsonx.data with the cuDF acceleration framework.

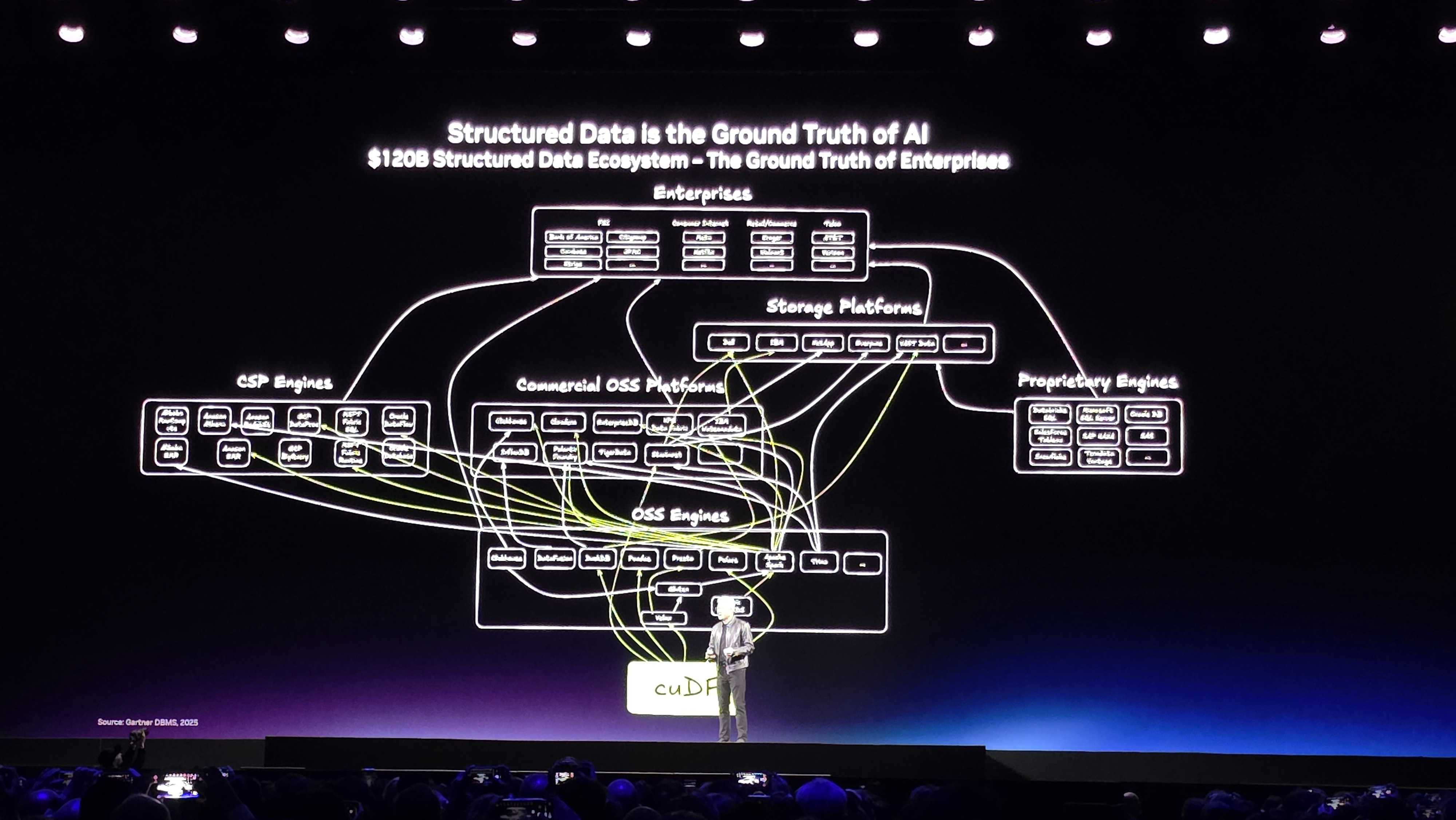

'This is my best slide'

Jensen jokes that he's going to spend the rest of the keynote going through the slide you can see above about structured data. This is "the ground truth" of enterprise computing.

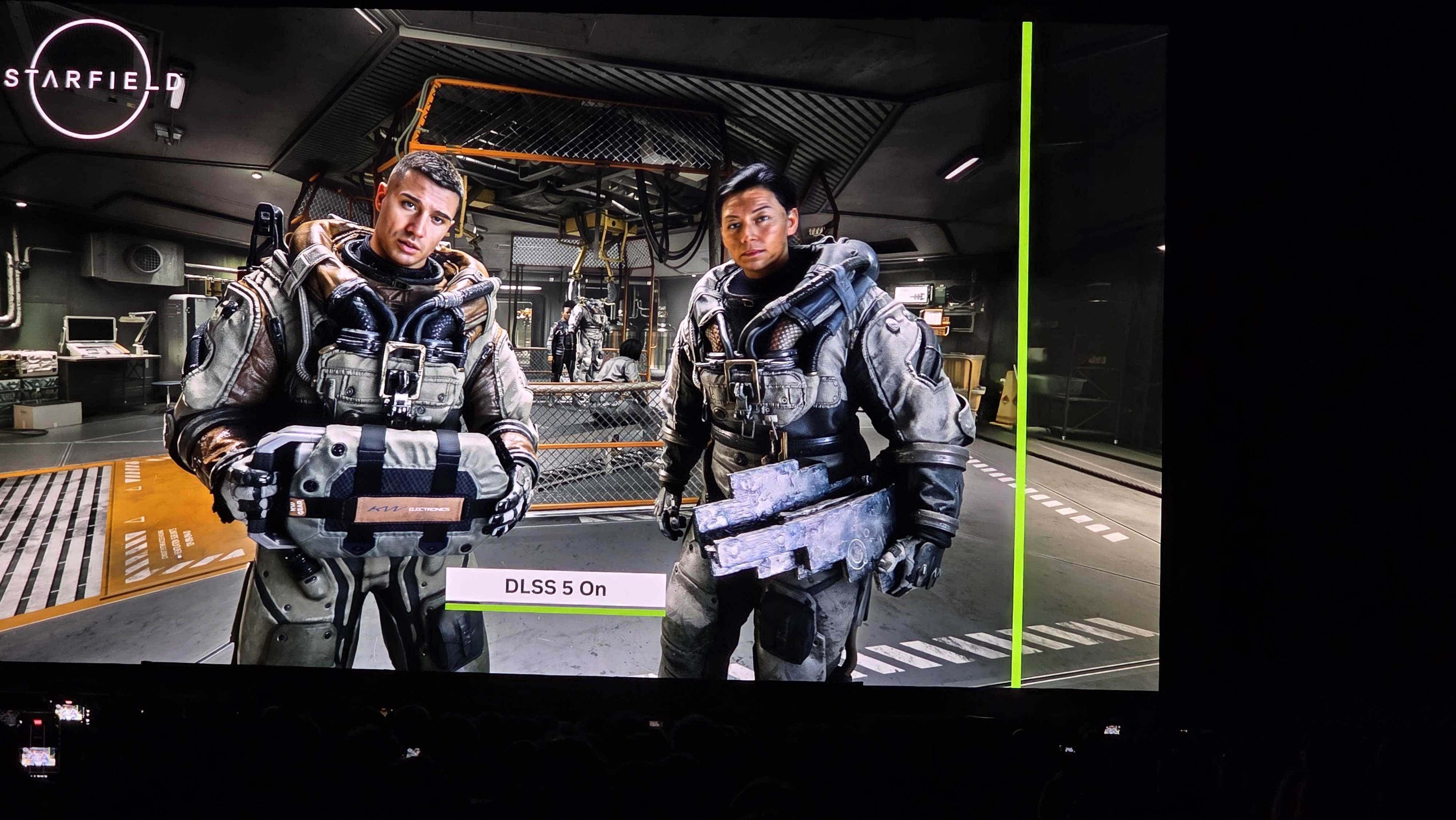

What is DLSS 5?

Nvidia says it combined controllable 3D graphics and structured data with generative worlds. "This concept of fusing structured data with generative AI will repeat itself in one industry after another industry after another industry."

Nvidia is showing off the next generation of computer graphics: DLSS 5

The first announcement is a big one: DLSS 5. Nvidia is showing it off in Resident Evil: Requiem, Hogwarts Legacy, and Starfield. We're all waiting eagerly to hear more details about what DLSS 5 includes, but the side-by-side comparisons are compelling.

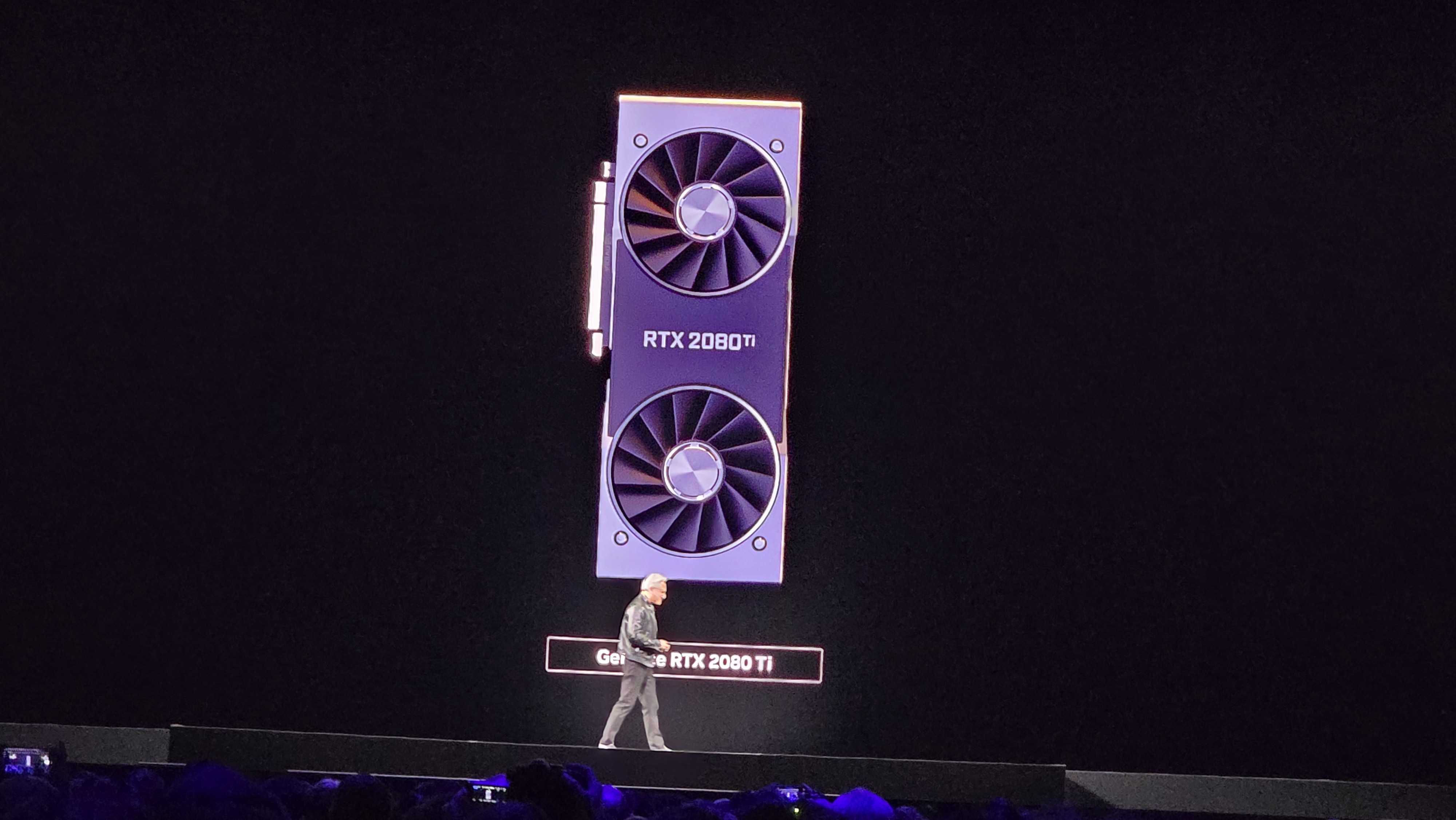

'GeForce is Nvidia's greatest marketing campaign'

Jensen says that "GeForce is Nvidia's greatest marketing campaign." It's an interesting way to frame the conversation, and one that Nvidia has been trying to crack for the past few years. Jensen paints a picture of Nvidia creating the first programmable shader 25 years ago, which eventually led to CUDA, and used GeForce as a vehicle to drive adoption.

Pricing of Ampere in the cloud is going up

The prevalence of CUDA has accelerated what Nvidia calls a "flywheel." Nvidia attracts developers who develop on CUDA, which leads to more people adopting Nvidia hardware, and the lifecycle continues. Because of this, Jensen says the price of GPUs using the now-dated Ampere architecture has actually gone up in the cloud.

'We've been working on CUDA for 20 years'

CUDA is one of the major reasons Nvidia is in the position it's in today, and this GTC marks the 20th anniversary of CUDA. "The single hardest thing is to have built up our install base, we're in every cloud and computer company in every single industry," says Jensen.

The man of the hour is here: CEO Jensen Huang

Jensen Huang has taken the stage in a familiar leather jacket. Sorry folks, there's no special jacket this time around. Jensen is starting off the show thanking some of the people that hosted the preshow leading up to the keynote.

And we're off!

We may have started a few minutes late, but the keynote has officially begun. We begin with a short video about AI tokens and all the wonderful things (according to Nvidia) we've all done with them, from healthcare to space to construction.

That's... A lot of country music?

We're all sitting in surprise here at 3DTested at the amount of country music playing before Jensen takes the stage. We're nearly a quarter past the top of the hour at this point and still waiting for the keynote to start. There's nothing wrong with country music, but rustic Americana and enterprise AI isn't a combo I'd normally expect.

Running a bit behind schedule

We're a few minutes past the top of the hour, and we're still waiting on the keynote to start. In the meantime, a quick reminder that you can watch along with us through the live stream above.

T-Minus 5 Minutes

Run to the bathroom, get your drink ready, and settle in. We're just a few minutes away from the start of GTC 2026. Jensen will probably start with a short history of Nvidia's role in AI, but we expect the announcements to rapid-fire out after that point. We're sat down in the SAP Center in San Jose and ready to dig in.

What to expect from GTC 2026

There's always room for surprises, especially at Nvidia's own GTC event, but there are a few key announcements we're focused on:

- Intel x Nvidia partnership — Nvidia bought $5 billion in Intel stock last year, and at the time, announced that the two companies would be working together on custom x86 processors across both the data center and consumer PCs. The deal has apparently been decades in the making. It's not clear if we'll hear about consumer or enterprise chips, or both, but there's a good chance we'll hear something from the partnership.

- The 'future of real-time rendering' — Nvidia presented at GDC (not GTC) about neural rendering, but just a week later, the company is teasing that it will reveal the "future of real-time rendering" at GTC (not GDC). Maybe it's a new DLSS feature, maybe it's something completely new. We don't know, but Nvidia has already confirmed something is coming for gamers during the keynote.

- More on Vera Rubin — Nvidia officially launched its Vera Rubin NVL72 in January, and it started shipping samples to customers just weeks ago. These next-gen AI data center boards are on-track for the second half of the year, so we expect to hear a lot about them during the keynote.

- AI agents — Since the release of OpenClaw, the tech industry has been washed in talk of AI agents. Nvidia will talk about AI agents during the keynote, that much is almost guaranteed. We could see an announcement of "NemoClaw," which is an AI agent Nvidia is reportedly developing to compete with OpenClaw.

- Nvidia N1/N1X — Perhaps the biggest rumor around Nvidia over the past year has been the N1 and N1X, which are two SoCs reportedly being developed for the consumer market. Do we finally see a reveal at this year's GTC? Perhaps, but this is the last item on this list for a reason.

We're moments away from GTC 2026

Hello and welcome to 3DTested's live blog for the GTC 2026 keynote. Jensen Huang is moments away from taking the stage. I, Jake Roach, will be tending the blog while our very own Paul Alcorn and Jeffrey Kampman are on the ground to cover all of the announcements in real time.

But AI diffusion models delivered awesome results! And that's why some years ago Nvidia decided to change tracks completely, while finding intermediate technologies and products to pay for the transition.

The idea is to completely replace the algorithmic/mathematical mix of simulation models for game (or in fact any visual) content with an approach that is purely based on machine learning models.

Perhaps, as an intermediate step, it might still use the same inputs that most game engines deliver to GPUs, the 3D meshes and the textures, but then apply them not in the current algorithmic manner, but mostly as "prompts" for a render that's similar to diffusion and/or how they interpolate frames today.

If you took the famous teapot as geometry data, I guess it's quite easy to imagine how a "post-Phong-render" or even post-raytrace-render AI-PU might be able to generate something that looks way more naturalistic, even if it has nothing to do with what the designer of the teapot had in mind "exactly".

The problem might be that an AI would really need the information that the triangle mesh is supposed to represent a teapot to do a good job. Identifying the teapot via a recognition model using a simple internal triangle mesh render might work, but ideally you'd want to use the highest level of annotation you can get, which is from the brain of the game designer, if those are still around.

In short the result looking natural is vastly more important in a game, than the result being authentic or an exact reproduction of the designer's intent (or even the strict adherence to the triangle mesh). And who in Hollywood was ever interested in realism (warts!), when they sold art or illusion?

Of course, modern scene data is slightly more complex than a teapot, and re-interpreting a scene for which you have no game developer's abstracted story data is harder still, but Nvidia might want to sell a few generations of products and game developers would need to adapt, too.

If you look at video generation tools today, their ability to produce short bursts of content that looks incredibly realistic can be quite good. It's mostly when things get longer or scenes/perspectives change completely that things go off the rails. With NPCs or map data, the huge, currently unexploited advantage is that their future actions, position, appearance etc. can be known and thus "prompted" long before they need to be rendered, so errors don't need to accumulate as they do in current video generation models, where the prompt remains static.

It's with vast distributed multi-player games where predictability can fail, because the shared decisions can't be agreed until real-time has passed, where I see a new wave of jitters and bugs befalling their outputs. PvE could become really rather incredible, eventually, if the money keeps flowing.

So the biggest benefit may actually be for the content creation industry, where the last human actors may go the way of the dodo or go back to putting out a begging bowl during live performances somewhere where humans can offer them a ball of rice in return for their antics.

BTW: I have zero insight into what Nvidia actually does. These are pure BI (bio intelligence) hallucinations from hints dropped here and there.

P.S.: the change of approach was hinted at years ago. But the way I imagined it to go, it would have required changing the game engines to offer the high quality/high abstraction and temporally sorted metadata to the AI-PUs well in advance.

I've heard zero reports of, say, Valve adding such functionality to a future release of their engine, so either I'm very wrong, or they managed to pull off a pretty impressive cover-up.